The ROI of Real-Time Machine Data: Why a 5-Minute Data Lag Costs You Thousands

There's a question that every manufacturing executive should be asking, but few actually ask it: How much does data latency cost us?

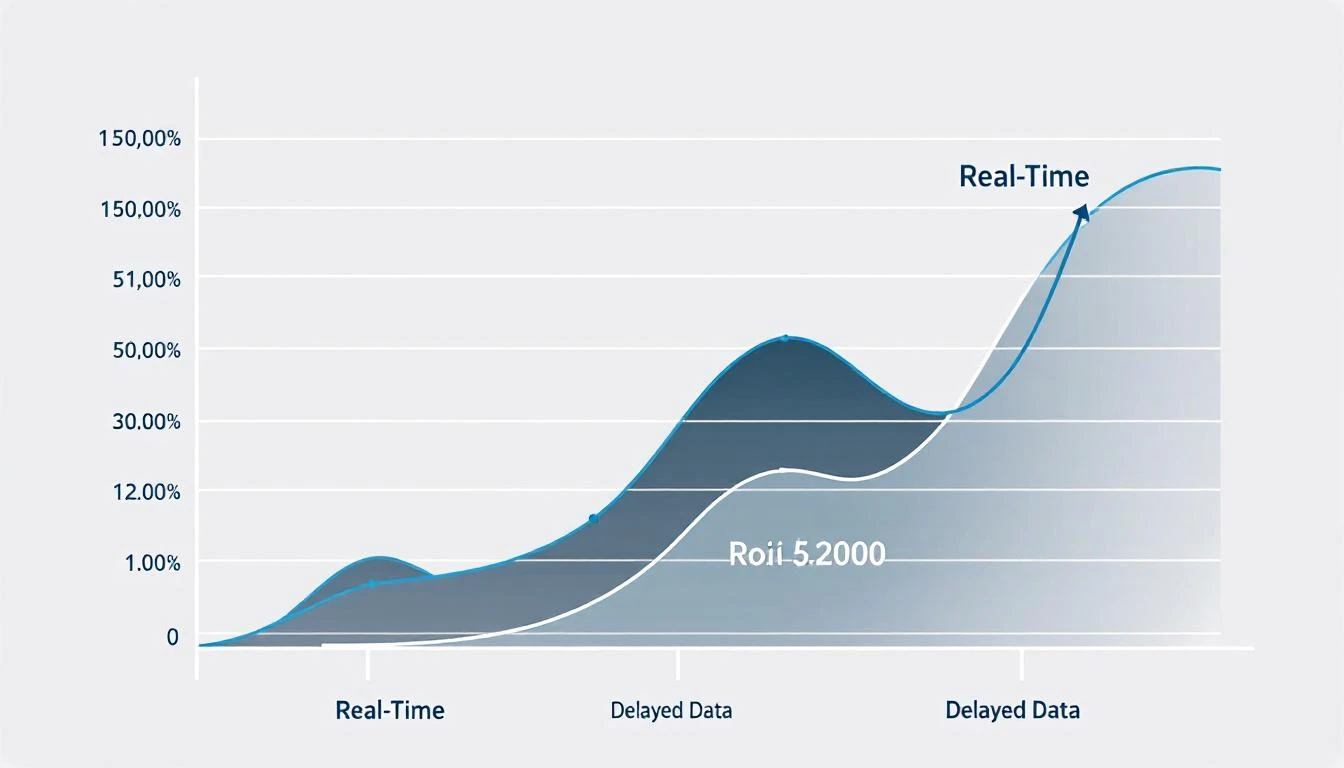

Not "do we have data?" — most factories have some form of production reporting. The question is about when that data reaches the people who can act on it. The difference between a 2-second alert and a 5-minute update isn't a technical detail. It's the difference between catching a $200 problem and cleaning up a $20,000 disaster.

This article quantifies that difference. We'll look at what happens at each level of data latency — real-time, near-real-time, delayed, and batch — and show exactly where the money goes when your data lags behind your machines.

The Data Latency Spectrum

Every manufacturing data system falls somewhere on this spectrum:

Tier 1: Real-Time (0–5 seconds)

Data appears in dashboards and alerts within seconds of the physical event on the machine. A temperature spike at 10:32:15 triggers an alert at 10:32:17.

Examples: Protocol-native IIoT platforms reading PLC tags (MachineCDN), hardwired SCADA systems, direct HMI connections.

Tier 2: Near-Real-Time (5–60 seconds)

Data is collected continuously but processed and displayed at intervals. A temperature spike at 10:32:15 appears in the dashboard at 10:32:45.

Examples: Cloud-based IIoT platforms with edge buffering, IoT sensor platforms with polling intervals.

Tier 3: Delayed (1–15 minutes)

Data is polled or uploaded periodically. A temperature spike at 10:32:15 might not appear until 10:45:00.

Examples: Some cloud platforms with aggregation layers, manual data entry systems with periodic sync, MES systems with batch uploads.

Tier 4: Batch (hourly, per-shift, or daily)

Data is collected during production and reviewed later. A temperature spike at 10:32 AM appears in the end-of-shift report at 4:00 PM — or in the daily report the next morning.

Examples: Shift reports, manual inspection logs, historian-based reporting with periodic queries, spreadsheet-based tracking.
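To make the tier boundaries concrete, here is a minimal Python sketch that maps the lag between a machine event and the moment it becomes visible onto the four tiers above. The function name is hypothetical, and treating anything slower than 15 minutes as batch is an assumption; the boundaries themselves come from the tier definitions.

```python
from datetime import datetime

def latency_tier(event_time: datetime, visible_time: datetime) -> str:
    """Classify the lag between a machine event and its appearance in a dashboard or report."""
    lag_seconds = (visible_time - event_time).total_seconds()
    if lag_seconds < 0:
        raise ValueError("visible_time must not precede event_time")
    if lag_seconds <= 5:
        return "Tier 1: Real-Time"
    if lag_seconds <= 60:
        return "Tier 2: Near-Real-Time"
    if lag_seconds <= 15 * 60:
        return "Tier 3: Delayed"
    # Assumption: anything slower than 15 minutes is treated as batch.
    return "Tier 4: Batch"

# The date is hypothetical; the clock times are the ones used in the tier examples.
spike = datetime(2024, 1, 15, 10, 32, 15)
print(latency_tier(spike, datetime(2024, 1, 15, 10, 32, 17)))  # Tier 1: Real-Time
print(latency_tier(spike, datetime(2024, 1, 15, 10, 32, 45)))  # Tier 2: Near-Real-Time
print(latency_tier(spike, datetime(2024, 1, 15, 10, 45, 0)))   # Tier 3: Delayed
print(latency_tier(spike, datetime(2024, 1, 15, 16, 0, 0)))    # Tier 4: Batch
```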

The Cost Model: What Each Latency Tier Actually Costs

Let's work through a concrete scenario that every manufacturer can relate to: a bearing failure developing on a critical production machine.

The Scenario

A critical injection molding machine starts developing a bearing failure. The early indicator is a 15% increase in motor current draw, followed by a gradual temperature rise, followed (3 hours later) by catastrophic bearing seizure.

Here's what happens at each data latency level:

Tier 1: Real-Time Detection (0–5 seconds)

What happens:

- 10:32 AM: Motor current increases 15% above baseline

- 10:32 AM: Real-time alert fires to maintenance engineer's phone

- 10:45 AM: Maintenance engineer checks vibration and current data trends remotely

- 11:00 AM: Bearing replacement scheduled for the next planned changeover

- 11:30 AM: Changeover begins; bearing replaced in 45 minutes

- 12:15 PM: Machine back in production

Cost:

- Machine downtime: 45 minutes (during planned changeover — no production loss)

- Bearing cost: $350 (standard replacement part, in stock)

- Maintenance labor: 1 hour at $65/hour = $65

- Production loss: $0 (repair during changeover)

- Total cost: ~$415
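The 10:32 AM alert in this timeline comes down to a threshold check on motor current. The sketch below is one simple way to express it, not a description of how any particular platform implements alerting. The function name, the amp values, and the single-reading trigger are assumptions; a production rule would likely require several consecutive readings to avoid firing on noise.

```python
def current_alert(baseline_amps: float, reading_amps: float,
                  threshold_pct: float = 15.0) -> bool:
    """Return True when the reading is at least threshold_pct above baseline."""
    if baseline_amps <= 0:
        raise ValueError("baseline_amps must be positive")
    rise_pct = (reading_amps - baseline_amps) / baseline_amps * 100.0
    return rise_pct >= threshold_pct

# Hypothetical readings against a 40 A baseline.
print(current_alert(40.0, 47.0))  # True  (17.5% above baseline)
print(current_alert(40.0, 42.0))  # False (5% above baseline)
```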

Tier 2: Near-Real-Time Detection (5–60 seconds)

Outcome: Essentially the same as real-time for this scenario. A 30-second delay on an alert that gives you 3 hours of lead time before failure is immaterial. The cost is still ~$415.

This is worth noting: for developing mechanical issues with hours of lead time, near-real-time is nearly as valuable as true real-time.

Tier 3: Delayed Detection (1–15 minutes)

What happens:

- 10:32 AM: Motor current increases (undetected)

- 10:45 AM: Data refresh shows current spike in dashboard

- 11:00 AM: Maintenance engineer notices during routine dashboard check (if they check)

- 11:30 AM: Engineer investigates, confirms developing issue

- 12:00 PM: Decision to replace bearing at next opportunity

- If no one happens to be watching the dashboard, the spike may go unnoticed until a more serious symptom appears

Cost: Similar to real-time if someone is actively monitoring. The risk is higher, though: a delay of up to 15 minutes, plus reliance on someone checking the dashboard, means subtle issues can develop further before detection. Estimated cost: ~$415–$2,000, depending on response time.

Tier 4: Batch Detection (hourly/shift/daily)

What happens:

- 10:32 AM: Motor current increases (undetected, unrecorded in real-time)

- 12:00 PM: Temperature begins rising (no one notices)

- 1:30 PM: Bearing seizes. Machine stops. Catastrophic failure.

- 1:30 PM: Operator reports machine down

- 2:00 PM: Maintenance investigates, diagnoses bearing failure

- 2:30 PM: Part not in stock — ordered expedited

- Next day: Part arrives. Repair and cleanup take 4 hours

- Total downtime: 18+ hours (1:30 PM to next day ~8 AM)

Cost:

- Machine downtime: 18 hours at $500/hour = $9,000

- Emergency bearing replacement (expedited): $750

- Secondary damage (shaft scoring from seized bearing): $2,500 repair

- Maintenance labor (emergency + repair): 8 hours at $95/hour (OT) = $760

- Scrap from interrupted production: $800

- Late delivery penalties (customer order delayed): $2,000

- Total cost: ~$15,810

The data latency premium: $15,395. Batch detection costs about 38x as much as real-time detection; the premium alone is about 37x the real-time cost.
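The arithmetic behind the two totals can be checked with a few lines of Python. All figures are taken from the scenario above; only the dictionary keys are made up for readability.

```python
# Line items from the Tier 1 (real-time) scenario.
real_time_costs = {
    "bearing": 350,
    "maintenance_labor": 1 * 65,   # 1 hour at $65/hour
    "production_loss": 0,          # repair happens during a planned changeover
}

# Line items from the Tier 4 (batch) scenario.
batch_costs = {
    "downtime": 18 * 500,          # 18 hours at $500/hour
    "expedited_bearing": 750,
    "secondary_damage": 2_500,
    "maintenance_labor": 8 * 95,   # 8 hours at $95/hour overtime
    "scrap": 800,
    "late_delivery_penalties": 2_000,
}

real_time_total = sum(real_time_costs.values())
batch_total = sum(batch_costs.values())
premium = batch_total - real_time_total

print(real_time_total)                          # 415
print(batch_total)                              # 15810
print(premium)                                  # 15395
print(round(premium / real_time_total, 1))      # 37.1 (premium vs. real-time cost)
print(round(batch_total / real_time_total, 1))  # 38.1 (batch total vs. real-time cost)
```

Swapping in your own hourly downtime rate and labor rates is the quickest way to see how the gap changes for your plant.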

Beyond Bearing Failures: Where Data Latency Costs You Money

The bearing example is dramatic, but it's just one category. Here are other scenarios where data speed directly impacts cost:

Quality Drift

The problem: A process variable (temperature, pressure, speed, fill volume) starts drifting outside optimal range. Every minute it runs off-spec, you're producing marginal or defective product.

Real-time value: Catch drift in seconds, adjust in minutes. Defective parts: 5–20.

Batch value: Discover drift in end-of-shift report. Defective parts produced before discovery: 500–5,000.

Cost difference: At $10–$50 per defective part (material + rework), the gap runs from about $4,950 (495 extra defects at $10) to about $249,000 (4,980 extra defects at $50) per event.
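The size of that gap follows directly from the defect counts and per-part costs. The sketch below pairs the low ends of each range and the high ends of each range, which is one reasonable reading of the numbers; the function name is hypothetical.

```python
def quality_gap(realtime_defects: int, batch_defects: int, cost_per_part: int) -> int:
    """Extra cost of discovering drift late: additional defective parts times cost per part."""
    return (batch_defects - realtime_defects) * cost_per_part

low = quality_gap(realtime_defects=5, batch_defects=500, cost_per_part=10)
high = quality_gap(realtime_defects=20, batch_defects=5000, cost_per_part=50)
print(low, high)  # 4950 249000
```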

Changeover Optimization

The problem: Changeovers take 45 minutes on average, but the variation is huge — some take 30 minutes, some take 90 minutes. Without real-time data, you don't know which changeovers are slow, why, or how to standardize.

Real-time value: Every changeover is timed automatically. Operators see their changeover time in real-time. Managers see shift-by-shift, operator-by-operator changeover data. Best practices are identified and standardized.

Batch value: Average changeover time is calculated at the end of the month. You know you're slow but don't know why, when, or who.

Cost difference: A 20% reduction in changeover time (realistic with real-time tracking) on a line doing 8 changeovers per day saves 72 minutes of productive time daily (9 minutes × 8 changeovers). At $500/hour, that's $600 per day, or $219,000 per year per line if the line runs 365 days a year.
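The savings estimate is easy to reproduce and adapt. In the sketch below, the defaults are the figures from the paragraph above; the 365 operating days is an assumption implied by the $219,000 result, and the function name is hypothetical.

```python
def changeover_savings(avg_minutes=45, reduction=0.20, changeovers_per_day=8,
                       rate_per_hour=500, operating_days=365):
    """Return (minutes saved per day, dollars saved per year)."""
    minutes_per_day = avg_minutes * reduction * changeovers_per_day
    annual_dollars = minutes_per_day / 60 * rate_per_hour * operating_days
    return round(minutes_per_day), round(annual_dollars)

print(changeover_savings())                    # (72, 219000)
print(changeover_savings(operating_days=250))  # (72, 150000)
```

A plant running a five-day week (roughly 250 days) would see closer to $150,000 per line under the same assumptions.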

Peak Demand Management

The problem: Your electricity tariff includes demand charges based on your peak 15-minute power draw. Starting multiple large machines simultaneously creates a demand spike that increases your demand charge for the entire billing period.

Real-time value: Monitor aggregate power draw in real-time. Implement staggered startup sequences. Alert when demand approaches peak thresholds.

Batch value: You see the peak demand on your monthly utility bill. Too late.

Cost difference: Demand charges can represent 30–50% of industrial electricity bills. Managing peaks can reduce demand charges by 15–25%, saving $15,000–$50,000 annually for a typical facility.
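A demand alert of this kind can be sketched as a rolling 15-minute average compared against a threshold. This is a simplified illustration: the class name, the one-reading-per-minute cadence, and the 90% warning ratio are assumptions, and real tariffs differ in how the 15-minute interval is defined (fixed vs. sliding), so check your utility's rules before relying on anything like this.

```python
from collections import deque

class DemandMonitor:
    """Track a rolling average of aggregate power draw (kW) over a 15-minute window."""

    def __init__(self, threshold_kw: float, window_minutes: int = 15,
                 warn_ratio: float = 0.9):
        self.threshold_kw = threshold_kw
        self.warn_ratio = warn_ratio
        # Assumption: one averaged reading arrives per minute.
        self.readings = deque(maxlen=window_minutes)

    def add_reading(self, kw: float) -> bool:
        """Add a reading; return True when the window average nears the threshold.

        Until the window fills, the average covers only the readings seen so far.
        """
        self.readings.append(kw)
        window_avg = sum(self.readings) / len(self.readings)
        return window_avg >= self.warn_ratio * self.threshold_kw

# Hypothetical plant: 1,000 kW demand threshold, steady 800 kW load.
monitor = DemandMonitor(threshold_kw=1000)
for _ in range(15):
    monitor.add_reading(800)

# Several large machines start at once and draw 1,200 kW.
warnings = [monitor.add_reading(1200) for _ in range(4)]
print(warnings)  # [False, False, False, True]
```

The warning fires on the fourth minute of the spike, while there is still time to delay the next machine start.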

Downtime Categorization

The problem: You know how many hours of downtime you had, but you don't know the breakdown: how much was planned maintenance, how much was changeovers, how much was material waiting, and how much was equipment failure?

Real-time value: Every stop is categorized as it happens — with duration, machine, shift, operator, and reason code captured automatically. Pareto charts show your top downtime drivers in real-time.

Batch value: Operators fill out downtime logs at the end of shift (if they remember). Categories are approximate. Duration is estimated. Root causes are vague.

Cost difference: According to Deloitte research, manufacturers with real-time downtime categorization reduce unplanned downtime 20–25% faster than those using manual/batch tracking.
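Once stops are captured with reason codes and durations, the Pareto view is a small aggregation. The sketch below assumes each stop arrives as a (reason code, minutes) pair; the reason codes and durations are made-up sample data, and the function name is hypothetical.

```python
from collections import Counter

def downtime_pareto(stops):
    """Aggregate (reason_code, minutes) pairs.

    Returns [(reason, total_minutes, cumulative_percent)], largest driver first.
    """
    totals = Counter()
    for reason, minutes in stops:
        totals[reason] += minutes
    grand_total = sum(totals.values())
    rows, running = [], 0
    for reason, minutes in totals.most_common():
        running += minutes
        rows.append((reason, minutes, round(100 * running / grand_total, 1)))
    return rows

# Hypothetical stops from one shift.
stops = [
    ("changeover", 45), ("material_wait", 20), ("equipment_failure", 90),
    ("changeover", 50), ("planned_maintenance", 30), ("equipment_failure", 15),
]
for row in downtime_pareto(stops):
    print(row)
# ('equipment_failure', 105, 42.0)
# ('changeover', 95, 80.0)
# ('planned_maintenance', 30, 92.0)
# ('material_wait', 20, 100.0)
```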

The Compound Effect: Why Small Delays Create Big Losses

Data latency doesn't just cost you per-incident — it compounds:

1. Missed Pattern Recognition

A 2°C temperature increase on Machine #7 is noise. A 2°C increase on Machine #7 at the same time every day for 5 days is a pattern — probably a cooling system degradation. Real-time trending catches this pattern. Daily reports bury it in averages.
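One simple way to separate that kind of pattern from noise is to look for the same hour exceeding the threshold on consecutive days. The sketch below is an illustration under assumptions, not a description of any vendor's analytics: the input layout (one dict per day mapping hour to deviation from baseline in °C) and the function name are hypothetical.

```python
def recurring_rise_hours(daily_deviation, min_rise_c=2.0, min_days=5):
    """Return hours (0-23) whose deviation met min_rise_c on at least
    min_days consecutive days.

    daily_deviation: list of dicts, one per day, mapping hour -> deviation in °C.
    """
    flagged = []
    for hour in range(24):
        streak = best = 0
        for day in daily_deviation:
            if day.get(hour, 0.0) >= min_rise_c:
                streak += 1
                best = max(best, streak)
            else:
                streak = 0  # a normal day breaks the run
        if best >= min_days:
            flagged.append(hour)
    return flagged

# Hypothetical week for Machine #7: 2 PM runs hot every day, 9 AM only once.
days = [{9: 0.3, 14: 2.1} for _ in range(5)]
days[2][9] = 2.5
print(recurring_rise_hours(days))  # [14]
```

The single 9 AM spike is ignored as noise, while the repeated 2 PM rise is flagged.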

2. Response Time Amplification

When a problem is detected in real-time, the maintenance engineer responds calmly, checks the data, plans the intervention. When the same problem is discovered after the machine has stopped, the response is reactive — emergency calls, overtime, expedited parts, secondary damage from running equipment to failure.

The same problem can cost 5–40x more to fix reactively than proactively, depending on when in its lifecycle it is detected. On average, McKinsey estimates that reactive maintenance costs 3–9x more than proactive maintenance.

3. Knowledge Decay

When an operator investigates a problem in real-time, the context is fresh. They know what they were running, what changed, and what happened. When they try to explain what happened 6 hours later in a shift report, critical details are lost. The root cause analysis suffers. The corrective action is weaker. The problem recurs.

Calculating Your Data Latency Cost

Here's a framework to estimate what data latency is costing your facility:

Step 1: Count your quality events

How many times per month do you discover quality issues after significant defective product has already been produced?

→ Multiply by average rework/scrap cost per event = Quality latency cost

Step 2: Measure your reactive maintenance ratio

What percentage of your maintenance work orders are emergency/reactive (vs. planned/predictive)?

→ Industry average: 40–60% reactive. Each reactive event costs 3–9x a planned event.

→ Calculate the premium you're paying = Maintenance latency cost

Step 3: Estimate your downtime knowledge gap

How many hours of downtime per month can you not attribute to a specific root cause?

→ Unattributed downtime can't be systematically reduced

→ Estimate the cost of those hours at your production rate = Visibility latency cost

Step 4: Add your energy waste

If you're not monitoring energy per machine in real-time, assume 15–25% waste (based on DOE assessments).

→ Your annual energy bill × 15% (the conservative end of that range) = Energy latency cost

Total data latency cost = Quality + Maintenance + Visibility + Energy

For a typical mid-size manufacturer ($10M–$50M revenue), this number usually lands between $200K and $1.5M per year.
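The four steps can be turned into a small calculator. The sketch below is one interpretation of the framework, not an official formula: the field names are hypothetical, the maintenance premium is modeled as the extra cost of a reactive event over a planned one, and the sample inputs are invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class LatencyInputs:
    quality_events_per_month: int
    avg_cost_per_quality_event: float
    reactive_events_per_month: int
    planned_event_cost: float
    reactive_multiplier: float              # the framework cites 3-9x
    unattributed_downtime_hours_per_month: float
    downtime_cost_per_hour: float
    annual_energy_bill: float
    energy_waste_fraction: float = 0.15     # conservative end of 15-25%

def annual_latency_cost(x: LatencyInputs) -> dict:
    """Annualize each of the four latency cost components and sum them."""
    quality = x.quality_events_per_month * x.avg_cost_per_quality_event * 12
    # Assumption: premium = what a reactive event costs beyond a planned one.
    maintenance = (x.reactive_events_per_month * x.planned_event_cost
                   * (x.reactive_multiplier - 1) * 12)
    visibility = (x.unattributed_downtime_hours_per_month
                  * x.downtime_cost_per_hour * 12)
    energy = x.annual_energy_bill * x.energy_waste_fraction
    parts = {"quality": quality, "maintenance": maintenance,
             "visibility": visibility, "energy": energy}
    parts["total"] = sum(parts.values())
    return {name: round(value) for name, value in parts.items()}

# Hypothetical mid-size plant.
example = LatencyInputs(
    quality_events_per_month=2, avg_cost_per_quality_event=5_000,
    reactive_events_per_month=4, planned_event_cost=1_000, reactive_multiplier=3,
    unattributed_downtime_hours_per_month=20, downtime_cost_per_hour=500,
    annual_energy_bill=400_000,
)
print(annual_latency_cost(example))
# {'quality': 120000, 'maintenance': 96000, 'visibility': 120000, 'energy': 60000, 'total': 396000}
```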

The Path from Batch to Real-Time

You don't have to transform overnight. Here's a practical migration path:

Phase 1: Connect Critical Equipment (Week 1–2)

Deploy protocol-native IIoT monitoring on your 5–10 most critical machines. Get real-time alerts for machine state, temperature, current draw, and production counts. This alone typically pays for itself within the first quarter.

Phase 2: Expand and Standardize (Month 1–3)

Connect remaining production equipment. Standardize dashboards and alerts. Begin tracking OEE, downtime categories, and energy per machine. Train operators and maintenance staff on dashboard use.

Phase 3: Predictive Analytics (Month 3–6)

With 3+ months of historical data, AI-powered platforms can begin predicting failures and quality issues. The same data you collected for monitoring now powers predictive models that prevent problems before they start.

MachineCDN is designed to get you to Phase 1 in an afternoon — not a quarter. The 3-minute device setup and zero IT requirements mean you can go from "no visibility" to "real-time dashboards" in a single shift.

The Bottom Line

Data latency isn't a technical problem. It's a financial problem. Every minute between when something happens on your factory floor and when someone can act on it has a measurable cost — in scrap, downtime, energy waste, and emergency maintenance.

The manufacturers who will win the next decade aren't the ones with the most machines. They're the ones with the fastest data. Real-time visibility turns every machine into a sensor, every operator into an analyst, and every shift into a learning opportunity.

Ready to eliminate your data latency? Book a demo with MachineCDN — we'll connect your machines and show you what real-time manufacturing data looks like. Setup takes 3 minutes. The ROI takes about 5 weeks.