EtherNet/IP and CIP Objects Explained: Implicit vs Explicit Messaging for IIoT [2026]

If you've spent any time integrating Allen-Bradley PLCs, Rockwell Automation cells, or Micro800-class controllers into a modern IIoT stack, you've encountered EtherNet/IP. It's the most widely deployed industrial Ethernet protocol in North America, yet the specifics of how it actually moves data (CIP objects, implicit vs explicit messaging, the scanner/adapter relationship) remain poorly understood by many engineers who use it daily.

This guide breaks down EtherNet/IP from the perspective of someone who has built edge gateways that communicate with these controllers in production. No marketing fluff, just the protocol mechanics that matter when you're writing code that reads tags from a PLC at sub-second intervals.

What EtherNet/IP Actually Is (And Isn't)

EtherNet/IP stands for EtherNet/Industrial Protocol, not "Ethernet IP" as in TCP/IP. The "IP" here means Industrial Protocol, not Internet Protocol. At its core, EtherNet/IP is an application-layer protocol that runs CIP (Common Industrial Protocol) over standard TCP/IP and UDP/IP transport.

The key architectural insight: CIP is the protocol. EtherNet/IP is just one of the networks that carries it. CIP also runs over DeviceNet (CAN bus) and ControlNet (token-passing). This means the object model, service codes, and data semantics are identical whether you're talking to a device over Ethernet, a CAN network, or a deterministic control network.

For IIoT integration, this matters because your edge gateway's parsing logic for CIP objects translates directly across all three physical layers — even if 90% of modern deployments use EtherNet/IP exclusively.

The CIP Object Model

CIP organizes everything as objects. Every device on the network is modeled as a collection of object instances, each with attributes, services, and behaviors. Understanding this hierarchy is essential for programmatic tag access.

Object Addressing

Every piece of data in a CIP device is addressed by three coordinates:

| Level | Description | Example |
|---|---|---|
| Class | The type of object | Class 0x04 = Assembly Object |
| Instance | A specific occurrence of that class | Instance 1 = Output assembly |
| Attribute | A property of that instance | Attribute 3 = Data bytes |

When your gateway creates a tag path like protocol=ab-eip&gateway=192.168.1.10&cpu=micro800&name=temperature_setpoint, the underlying CIP request resolves that symbolic tag name into a class/instance/attribute triplet.
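To make the class/instance/attribute triplet concrete, here is a minimal sketch of how such a triplet can be encoded as a CIP path using 8-bit and 16-bit logical segments. The function names `logical_segment` and `build_epath` are illustrative, not part of any library, and the sketch ignores 32-bit IDs and other segment types.

```python
import struct

# Segment-type bytes for the 8-bit logical segment format.
CLASS_SEG, INSTANCE_SEG, ATTRIBUTE_SEG = 0x20, 0x24, 0x30


def logical_segment(kind: int, value: int) -> bytes:
    """Encode one logical segment (class, instance, or attribute).

    IDs up to 0xFF use the 8-bit format: [segment byte, value].
    IDs up to 0xFFFF use the 16-bit format: the segment byte with its
    low bit set, one pad byte, then the value as little-endian uint16.
    """
    if not 0 <= value <= 0xFFFF:
        raise ValueError("this sketch only handles 8- and 16-bit IDs")
    if value <= 0xFF:
        return bytes([kind, value])
    return bytes([kind | 0x01, 0x00]) + struct.pack("<H", value)


def build_epath(class_id: int, instance: int, attribute=None) -> bytes:
    """Concatenate class, instance, and optional attribute segments."""
    path = logical_segment(CLASS_SEG, class_id)
    path += logical_segment(INSTANCE_SEG, instance)
    if attribute is not None:
        path += logical_segment(ATTRIBUTE_SEG, attribute)
    return path


# Assembly Object (0x04), instance 1, attribute 3 (data bytes)
print(build_epath(0x04, 1, 3).hex(" "))  # 20 04 24 01 30 03
```

Symbolic tag names take a different route (a symbolic segment carrying the name itself), which is why libraries usually hide path construction behind a tag string.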

Essential CIP Objects for IIoT

Here are the objects you'll interact with most frequently:

Identity Object (Class 0x01) — Every CIP device has one. Vendor ID, device type, serial number, product name. This is your first read when auto-discovering devices on a network. For fleet management, querying this object gives you hardware revision, firmware version, and a unique serial number that serves as a device fingerprint.

Message Router (Class 0x02) — Routes incoming requests to the correct object. You never address it directly, but understanding that it exists explains why a single TCP connection can multiplex requests to dozens of different objects without confusion.

Assembly Object (Class 0x04) — This is where I/O data lives. Assemblies aggregate multiple data points into a single, contiguous block. When you configure implicit messaging, you're essentially subscribing to an assembly object that the PLC updates at a fixed rate.

Connection Manager (Class 0x06) — Manages the lifecycle of connections. Forward Open, Forward Close, and Large Forward Open requests all go through this object. When your edge gateway opens a connection to read 50 tags, the Connection Manager allocates resources and returns a connection ID.

Implicit vs Explicit Messaging: The Critical Distinction

This is where most IIoT integration mistakes happen. EtherNet/IP supports two fundamentally different messaging paradigms, and choosing the wrong one leads to either wasted bandwidth or missed data.

Explicit Messaging (Request/Response)

Explicit messaging works like HTTP: your gateway sends a request, the PLC processes it, and sends a response. It uses TCP for reliability.

When to use explicit messaging:

- Reading configuration parameters

- Writing setpoints or recipe values

- Querying device identity and diagnostics

- Any operation where you need a guaranteed response

- Tag reads at intervals > 100ms

The tag read flow:

Gateway PLC (Micro800)

| |

|--- TCP Connect (port 44818) -->|

|<-- TCP Accept ------------------|

| |

|--- Register Session ---------->|

|<-- Session Handle: 0x1A2B ----|

| |

|--- Read Tag Service ---------->|

| (class 0x6B, service 0x4C) |

| tag: "blender_speed" |

|<-- Response: FLOAT 1250.5 -----|

| |

|--- Read Tag Service ---------->|

| tag: "motor_current" |

|<-- Response: FLOAT 12.3 ------|

Each tag read is a separate CIP request encapsulated in a TCP packet. For reading dozens of tags, this adds up — each round trip includes TCP overhead, CIP encapsulation, and PLC processing time.
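The Register Session step in the flow above can be sketched at the byte level. This is a minimal illustration of the 24-byte encapsulation header followed by the 4-byte Register Session payload. The helper names are made up for this example, and the reply is simulated locally instead of read from a socket.

```python
import struct

# Encapsulation header: command, length, session handle, status,
# 8-byte sender context, options. All fields are little-endian.
ENCAP_HEADER = struct.Struct("<HHII8sI")
REGISTER_SESSION = 0x0065


def build_register_session(context: bytes = b"\x00" * 8) -> bytes:
    """Build a Register Session request (28 bytes in total)."""
    payload = struct.pack("<HH", 1, 0)  # protocol version 1, option flags 0
    header = ENCAP_HEADER.pack(REGISTER_SESSION, len(payload), 0, 0, context, 0)
    return header + payload


def parse_session_handle(reply: bytes) -> int:
    """Extract the session handle from a Register Session reply."""
    command, _length, session, status, _ctx, _opts = ENCAP_HEADER.unpack_from(reply)
    if command != REGISTER_SESSION or status != 0:
        raise ValueError(
            f"RegisterSession failed: command=0x{command:04X} status=0x{status:08X}"
        )
    return session


request = build_register_session()
# Simulated reply carrying the handle 0x1A2B from the diagram above.
fake_reply = ENCAP_HEADER.pack(REGISTER_SESSION, 4, 0x1A2B, 0, b"\x00" * 8, 0)
fake_reply += struct.pack("<HH", 1, 0)
print(len(request), hex(parse_session_handle(fake_reply)))  # 28 0x1a2b
```

In a real gateway the request would be written to a TCP socket on port 44818 and the returned handle copied into the header of every later request on that connection.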

Performance characteristics:

- Typical round-trip: 5–15ms per tag on a local network

- 50 tags × ~10ms ≈ 500ms per polling cycle when tags are read one at a time

- Connection timeout: typically 2000ms (configurable)

- Maximum concurrent sessions: depends on PLC model (Micro800: ~8–16)
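The cycle-time arithmetic above is simple enough to turn into a planning helper. This sketch assumes strictly sequential reads with a fixed per-tag round trip, which is a simplification; the function names are illustrative.

```python
def sequential_cycle_ms(tag_count: int, round_trip_ms: float) -> float:
    """Time to poll every tag once when reads are issued one after another."""
    return tag_count * round_trip_ms


def max_tags_per_cycle(interval_ms: float, round_trip_ms: float) -> int:
    """Largest tag count that still fits inside one polling interval."""
    return int(interval_ms // round_trip_ms)


# Sweep the 5-15 ms round-trip range quoted above for 50 tags.
for rtt in (5, 10, 15):
    print(f"50 tags at {rtt} ms/tag -> {sequential_cycle_ms(50, rtt):.0f} ms per cycle")

print(max_tags_per_cycle(1000, 10))  # 100 tags fit in a 1 s interval at 10 ms each
```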

Implicit Messaging (I/O Data)

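Implicit messaging is a scheduled data exchange carried over UDP. Although UDP itself is connectionless, the exchange runs on a CIP connection that is first negotiated with a Forward Open over TCP. Once that connection is open, the PLC pushes data at a fixed rate without being asked; think of it as a PLC-initiated publish.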

When to use implicit messaging:

- Continuous process monitoring (temperature, pressure, flow)

- Motion control feedback

- Any data that changes frequently (< 100ms intervals)

- High tag counts where polling overhead is unacceptable

The connection flow:

Gateway PLC

| |

|--- Forward Open (TCP) ------->|

| RPI: 50ms |

| Connection type: Point-to-Point |

| O→T Assembly: Instance 100 |

| T→O Assembly: Instance 101 |

|<-- Forward Open Response ------|

| Connection ID: 0x4F2E |

| |

|<== I/O Data (UDP, every 50ms) =|

|<== I/O Data (UDP, every 50ms) =|

|<== I/O Data (UDP, every 50ms) =|

| ...continuous... |

The Requested Packet Interval (RPI) is specified in microseconds during the Forward Open. Common values:

- 10ms (10,000 μs) — motion control

- 50ms — process monitoring

- 100ms — general I/O

- 500ms–1000ms — slow-changing values (temperature, level)

Critical detail: The data format of implicit messages is defined by the assembly object, not by the message itself. Your gateway must know the assembly layout in advance — which bytes correspond to which tags, their data types, and byte ordering. There's no self-describing metadata in the UDP packets.
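Because the packets carry no metadata, the gateway needs its own description of the assembly. The sketch below decodes a buffer against a hand-written layout table. The layout itself (field names, offsets, types) is entirely hypothetical, and the code assumes the input is the assembly data only, with any transport framing such as a sequence count already stripped.

```python
import struct
from typing import NamedTuple


class Field(NamedTuple):
    name: str
    offset: int  # byte offset inside the assembly data
    fmt: str     # struct format character (f = REAL, H = UINT, B = USINT)


# Hypothetical layout for an 11-byte input assembly.
LAYOUT = [
    Field("temperature", 0, "f"),
    Field("pressure", 4, "f"),
    Field("alarm_word", 8, "H"),
    Field("running", 10, "B"),
]


def decode_assembly(data: bytes, layout=LAYOUT) -> dict:
    """Decode little-endian fields from raw assembly bytes."""
    values = {}
    for field in layout:
        (value,) = struct.unpack_from("<" + field.fmt, data, field.offset)
        values[field.name] = value
    return values


sample = struct.pack("<ffHB", 72.5, 1.25, 0x0004, 1)
print(decode_assembly(sample))
# {'temperature': 72.5, 'pressure': 1.25, 'alarm_word': 4, 'running': 1}
```

Keeping the layout in data instead of code means a change to the PLC program only requires editing the table.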

Scanner/Adapter Architecture

In EtherNet/IP terminology:

- Scanner = the device that initiates connections and consumes data (your edge gateway, HMI, or supervisory PLC)

- Adapter = the device that produces data (field I/O modules, drives, instruments)

A PLC can act as both: it's an adapter to the SCADA system above it, and a scanner to the I/O modules below it.

What This Means for IIoT Gateways

Your edge gateway is a scanner. When designing its communication stack, you need to handle:

- Session registration: Before any CIP communication, register a session with the target device. This returns a session handle that must be included in every subsequent request. Session handles are 32-bit integers; manage them carefully across reconnects.

- Connection management: For explicit messaging, a single TCP connection can carry multiple CIP requests. For implicit messaging, each connection requires a Forward Open with specific parameters. Plan your connection budget: Micro800 controllers support 8–16 simultaneous connections depending on firmware.

- Tag path resolution: Symbolic tag names (like B3_0_0_blender_st_INT) must be resolved to CIP paths. For Micro800 controllers, the tag path format is protocol=ab-eip&gateway=<ip>&cpu=micro800&elem_count=<n>&elem_size=<s>&name=<tagname>, where elem_size is 1 (bool/int8), 2 (int16), or 4 (int32/float).

- Array handling: CIP supports reading arrays with a start index and element count. A single request can read up to 255 elements. For arrays, the tag path includes the index: tagname[start_index].
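The tag path format described above is easy to get wrong by hand, so a small builder with validation helps. This is a sketch only: `build_tag_path` is a hypothetical helper that assembles the string shown in the text and enforces the element-size and 255-element limits mentioned there.

```python
def build_tag_path(gateway, name, elem_size, elem_count=1, start_index=None):
    """Build a Micro800 tag path string in the format shown above.

    elem_size must be 1, 2, or 4 bytes; elem_count is capped at 255.
    start_index, when given, is appended as name[start_index].
    """
    if elem_size not in (1, 2, 4):
        raise ValueError("elem_size must be 1, 2, or 4")
    if not 1 <= elem_count <= 255:
        raise ValueError("elem_count must be between 1 and 255")
    if start_index is not None:
        name = f"{name}[{start_index}]"
    return (
        f"protocol=ab-eip&gateway={gateway}&cpu=micro800"
        f"&elem_count={elem_count}&elem_size={elem_size}&name={name}"
    )


print(build_tag_path("192.168.1.10", "temperature_setpoint", 4))
print(build_tag_path("192.168.1.10", "zone_temps", 4, elem_count=8, start_index=2))
```

Rejecting bad sizes at build time is cheaper than diagnosing corrupted values after the read.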

Data Types and Byte Ordering

CIP uses little-endian byte ordering for all integer types, which is native to x86-based controllers. However, when tag values arrive at your gateway, the handling depends on the data type:

| CIP Type | Size | Byte Order | Notes |
|---|---|---|---|
| BOOL | 1 byte | N/A | 0x00=false, 0x01=true |
| INT8 / SINT | 1 byte | N/A | Signed: -128 to 127 |
| INT16 / INT | 2 bytes | Little-endian | -32,768 to 32,767 |
| INT32 / DINT | 4 bytes | Little-endian | Indexed at offset × 4 |
| UINT16 / UINT | 2 bytes | Little-endian | 0 to 65,535 |
| UINT32 / UDINT | 4 bytes | Little-endian | Indexed at offset × 4 |
| REAL / FLOAT | 4 bytes | Little-endian (IEEE 754) | Indexed at offset × 4 |

A common gotcha: When reading 32-bit values, the element offset in the response buffer is index × 4 bytes from the start. For 16-bit values, it's index × 2. Getting this wrong silently produces garbage values that look plausible — a classic source of phantom sensor readings.
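The offset rule can be shown with a short sketch that derives the byte offset from the element size, so the two never drift apart. The type-to-format mapping and the `read_element` helper are illustrative, not taken from any particular library.

```python
import struct

# CIP elementary types mapped to struct format characters.
CIP_FORMATS = {
    "SINT": "b", "USINT": "B",
    "INT": "h", "UINT": "H",
    "DINT": "i", "UDINT": "I",
    "REAL": "f",
}


def read_element(buf: bytes, cip_type: str, index: int):
    """Read element `index` from a little-endian response buffer."""
    fmt = "<" + CIP_FORMATS[cip_type]
    size = struct.calcsize(fmt)  # 1, 2, or 4 bytes
    return struct.unpack_from(fmt, buf, index * size)[0]


# Four INT (16-bit) values as they would arrive from the controller.
buf = struct.pack("<4h", 100, 200, 300, 400)

print(read_element(buf, "INT", 1))   # 200, the correct value
print(read_element(buf, "DINT", 1))  # 26214700, plausible-looking garbage
```

The second read shows the failure mode: treating 16-bit data as 32-bit fuses two neighbouring elements (300 and 400) into one number without raising any error.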

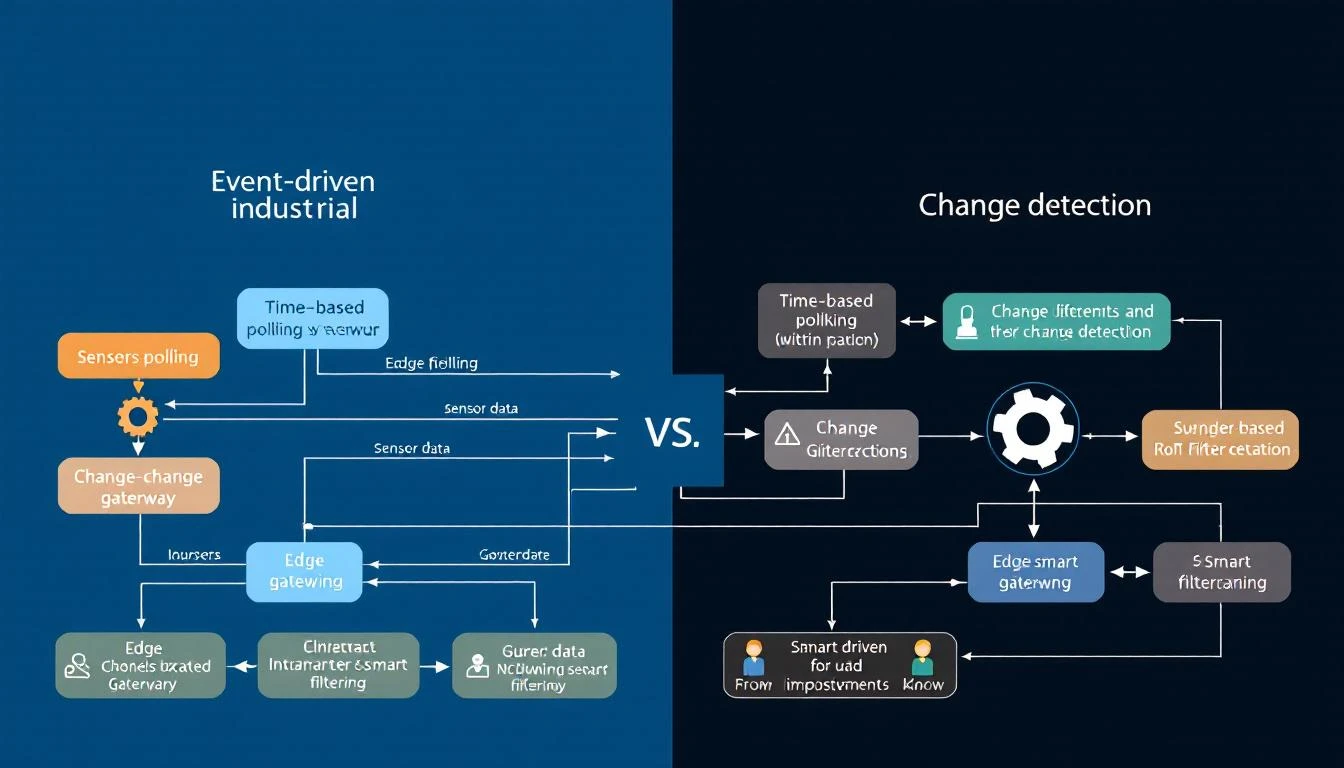

Practical Integration Pattern: Interval-Based Tag Reading

In production IIoT deployments, not every tag needs to be read at the same rate. A blender's running status might change once per shift, while a motor current needs 1-second resolution. A well-designed gateway implements per-tag interval scheduling:

Tag Configuration:

- blender_status: type=bool, interval=60s, compare=true

- motor_speed: type=float, interval=5s, compare=false

- temperature_sp: type=float, interval=10s, compare=true

- alarm_word: type=uint16, interval=1s, compare=true

The compare flag is crucial for bandwidth optimization. When enabled, the gateway only forwards a value to the cloud if it has changed since the last read. For boolean status tags that might stay constant for hours, this eliminates 99%+ of redundant transmissions.
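One way to sketch per-tag intervals with compare-based forwarding is a small scheduler that tracks the next due time and last value for each tag. The class and field names here are assumptions made for illustration; timestamps are passed in explicitly so the logic is easy to test.

```python
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass
class TagConfig:
    name: str
    interval_s: float
    compare: bool
    next_due: float = 0.0
    last_value: Any = None
    has_value: bool = False


class TagScheduler:
    def __init__(self, tags: List[TagConfig]):
        self.tags: Dict[str, TagConfig] = {t.name: t for t in tags}

    def due(self, now: float) -> List[str]:
        """Names of tags whose read interval has elapsed."""
        return [t.name for t in self.tags.values() if now >= t.next_due]

    def record(self, name: str, value: Any, now: float) -> bool:
        """Store a fresh read; return True if it should be forwarded."""
        tag = self.tags[name]
        tag.next_due = now + tag.interval_s
        changed = (not tag.has_value) or value != tag.last_value
        tag.last_value, tag.has_value = value, True
        # compare=False tags are always forwarded; compare=True only on change.
        return changed or not tag.compare


sched = TagScheduler([
    TagConfig("blender_status", 60, compare=True),
    TagConfig("motor_speed", 5, compare=False),
])
print(sched.due(0.0))                                # both tags are due at start
print(sched.record("blender_status", True, 0.0))     # True: first value
print(sched.record("motor_speed", 1250.5, 0.0))      # True: compare disabled
print(sched.due(5.0))                                # ['motor_speed']
print(sched.record("blender_status", True, 60.0))    # False: unchanged
```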

Dependent Tag Chains

Some tags are only meaningful when a parent tag changes. For example, when a machine_state tag transitions from IDLE to RUNNING, you want to immediately read a cascade of operational tags (speed, temperature, pressure) regardless of their normal intervals.

This pattern — triggered reads on value change — dramatically reduces average bandwidth while ensuring you never miss the data that matters. The gateway maintains a dependency graph where certain tags trigger force-reads of their children.
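A dependency graph of this kind can be as small as a dictionary from parent tag to child tags. The sketch below is one possible shape, not a description of any specific gateway: it returns the children to force-read whenever a parent value changes, and it deliberately does not trigger on the very first observation because there is no previous value to compare against.

```python
from typing import Any, Dict, List

_MISSING = object()  # sentinel for "no previous value seen"


class DependencyGraph:
    def __init__(self, children: Dict[str, List[str]]):
        self.children = children
        self.last: Dict[str, Any] = {}

    def on_read(self, tag: str, value: Any) -> List[str]:
        """Record a read and return the child tags to force-read, if any."""
        previous = self.last.get(tag, _MISSING)
        self.last[tag] = value
        if previous is _MISSING or previous == value:
            return []
        return list(self.children.get(tag, []))


graph = DependencyGraph({"machine_state": ["speed", "temperature", "pressure"]})
print(graph.on_read("machine_state", "IDLE"))     # []: first observation
print(graph.on_read("machine_state", "IDLE"))     # []: unchanged
print(graph.on_read("machine_state", "RUNNING"))  # ['speed', 'temperature', 'pressure']
```

The returned list would be handed to the read loop as an immediate work queue, bypassing each child's normal interval.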

Handling Connection Failures

EtherNet/IP connections fail. PLCs reboot. Network switches drop packets. A production-grade gateway implements:

- Retry with backoff — On read failure (typically error code -32 for connection timeout), retry up to 3 times before declaring the link down.

- Link state tracking — Maintain a boolean link state per device. Transition to DOWN on persistent failures; transition to UP on the first successful read. Deliver link state changes immediately (not batched) as they're high-priority events.

- Automatic reconnection — On link DOWN, destroy the existing connection context and attempt to re-establish. Don't just retry on the dead socket.

- Hourly forced reads — Even when using compare-based transmission, periodically force-read and deliver all tags. This prevents state drift where the gateway and cloud have different views of a value that changed during a brief disconnection.
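The retry and link-state rules above can be sketched as a small state machine. It assumes synchronous reads that report 0 for success and a negative code (such as -32) for failure; the `LinkMonitor` class and its return values are illustrative names, and the actual teardown and reconnect are left to the caller.

```python
from typing import Optional

TIMEOUT_ERROR = -32  # error code quoted in the text for a timed-out read


class LinkMonitor:
    def __init__(self, max_failures: int = 3):
        self.max_failures = max_failures
        self.failures = 0
        self.link_up: Optional[bool] = None  # unknown until the first result

    def on_result(self, status: int) -> Optional[str]:
        """Feed one read status; return "UP" or "DOWN" on a transition."""
        if status == 0:
            self.failures = 0
            if self.link_up is not True:
                self.link_up = True
                return "UP"
            return None
        self.failures += 1
        if self.failures >= self.max_failures and self.link_up is not False:
            self.link_up = False
            return "DOWN"  # caller should destroy and recreate the connection
        return None


monitor = LinkMonitor()
for status in (0, 0, TIMEOUT_ERROR, TIMEOUT_ERROR, TIMEOUT_ERROR, 0):
    event = monitor.on_result(status)
    if event:
        print(f"link {event}")  # deliver immediately, outside the normal batch
```

Only transitions produce events, so a device that stays down does not flood the uplink with repeated DOWN messages.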

Batching for MQTT Delivery

The gateway doesn't forward each tag value individually to the cloud. Instead, it implements a batch-and-forward pattern:

- Start a batch group with a timestamp

- Accumulate tag values (with ID, status, type, and value data)

- Close the group when either:

  - The batch size exceeds the configured maximum (typically 4KB)

  - The collection timeout expires (typically 60 seconds)

- Serialize the batch (JSON or binary) and push to an output buffer

- The output buffer handles MQTT QoS 1 delivery with page-based flow control
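The close-on-size-or-timeout rule can be sketched with a small accumulator. This example uses JSON and estimates batch size from the serialized entries; the class name, field names, and size accounting are assumptions for illustration, and the MQTT output buffer is out of scope here.

```python
import json
from typing import Optional


class Batcher:
    def __init__(self, max_bytes: int = 4096, timeout_s: float = 60.0):
        self.max_bytes = max_bytes
        self.timeout_s = timeout_s
        self.group = None
        self.started = 0.0
        self.size = 0

    def add(self, tag_id: int, status: int, value, now: float) -> Optional[str]:
        """Add one value; return a serialized batch if the group just closed."""
        if self.group is None:
            self.group = {"ts": now, "values": []}
            self.started = now
        entry = {"id": tag_id, "status": status, "value": value}
        self.group["values"].append(entry)
        self.size += len(json.dumps(entry))
        return self._maybe_close(now)

    def tick(self, now: float) -> Optional[str]:
        """Call periodically so a quiet batch still closes on timeout."""
        return self._maybe_close(now)

    def _maybe_close(self, now: float) -> Optional[str]:
        if self.group is None:
            return None
        too_big = self.size > self.max_bytes
        too_old = now - self.started >= self.timeout_s
        if not (too_big or too_old):
            return None
        payload = json.dumps(self.group)
        self.group, self.size = None, 0
        return payload


batcher = Batcher()
batcher.add(1, 0, 12.3, now=0.0)
print(batcher.tick(now=30.0))  # None: still collecting
print(batcher.tick(now=60.0))  # serialized batch, closed by timeout
```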

Binary serialization is preferred for bandwidth-constrained cellular connections. A typical binary batch frame:

Header: 0xF7 (command byte)

4 bytes: number of groups

Per group:

4 bytes: timestamp

2 bytes: device type

4 bytes: serial number

4 bytes: number of values

Per value:

2 bytes: tag ID

1 byte: status (0x00 = OK)

1 byte: array size

1 byte: element size (1, 2, or 4)

N bytes: packed data (MSB → LSB)

This binary format achieves roughly 3–5x compression over equivalent JSON, which matters when you're paying per-megabyte on cellular or satellite links.
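The frame layout above maps naturally onto `struct`. The sketch below packs one frame under two assumptions that the layout does not state explicitly: all multi-byte header fields are big-endian (matching the MSB-first data), and element values are passed as unsigned integers. The function names are illustrative.

```python
import struct


def pack_value(tag_id: int, status: int, elem_size: int, elements) -> bytes:
    """Per value: 2-byte tag ID, status, array size, element size, then data."""
    if elem_size not in (1, 2, 4):
        raise ValueError("elem_size must be 1, 2, or 4")
    head = struct.pack(">HBBB", tag_id, status, len(elements), elem_size)
    # Data is packed MSB first, one element after another.
    body = b"".join(int(e).to_bytes(elem_size, "big") for e in elements)
    return head + body


def pack_group(timestamp: int, device_type: int, serial: int, values) -> bytes:
    """Per group: timestamp, device type, serial number, value count, values."""
    head = struct.pack(">IHII", timestamp, device_type, serial, len(values))
    return head + b"".join(values)


def pack_frame(groups) -> bytes:
    """Frame: 0xF7 command byte, 4-byte group count, then the groups."""
    return struct.pack(">BI", 0xF7, len(groups)) + b"".join(groups)


value = pack_value(tag_id=7, status=0x00, elem_size=2, elements=[0x1234])
group = pack_group(1_700_000_000, device_type=1, serial=42, values=[value])
frame = pack_frame([group])
print(len(frame), frame.hex(" "))
```

A single 16-bit value costs 7 bytes on top of the 19 bytes of frame and group headers, which is where the saving over JSON comes from. REAL values would need their 32-bit pattern extracted first, which this sketch leaves out.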

Performance Benchmarks

Based on production deployments with Micro800 controllers:

| Scenario | Tags | Cycle Time | Bandwidth |
|---|---|---|---|
| All explicit, 1s interval | 50 | ~800ms | ~2KB/s JSON |
| All explicit, 5s interval | 100 | ~1200ms | ~1KB/s JSON |
| Mixed interval + compare | 100 | Varies | ~200B/s binary |
| Implicit I/O, 50ms RPI | 20 | 50ms fixed | ~4KB/s |

The "mixed interval + compare" row shows the power of intelligent scheduling — by reading fast-changing tags frequently and slow-changing tags infrequently, and only forwarding values that actually changed, you can monitor 100+ tags with less bandwidth than 20 tags on implicit I/O.

Common Pitfalls

1. Exhausting connection slots. Each Forward Open consumes a connection slot on the PLC. Open too many and you'll get "Connection Refused" errors. Pool your connections and reuse sessions.

2. Mismatched element sizes. If you request elem_size=4 but the tag is actually INT16, you'll read adjacent memory and get corrupted values. Always match element size to the tag's actual data type.

3. Falling into the simulator trap. When testing with a PLC simulator, random values mask real issues like byte-ordering bugs and timeout handling. Test against real hardware before deploying.

4. Not handling -32 errors. Error code -32 from libplctag is PLCTAG_ERR_TIMEOUT: the operation timed out, which in practice usually means the connection to the PLC has failed. Three consecutive -32s should trigger a full disconnect/reconnect cycle, not just a retry on the same broken connection.

5. Blocking on tag creation. Creating a tag handle (plc_tag_create) can block for the full timeout duration if the PLC is unreachable. Use appropriate timeouts (2000ms is a reasonable default) and handle negative return values.

How machineCDN Handles EtherNet/IP

machineCDN's edge gateway natively supports EtherNet/IP with the patterns described above: per-tag intervals, compare-based change detection, dependent tag chains, binary batch serialization, and store-and-forward buffering. When you connect a Micro800 or CompactLogix controller, the gateway auto-detects the protocol, reads device identity, and begins scheduled tag acquisition — no manual configuration of CIP class/instance/attribute paths required.

The platform handles the complexity of connection management, retry logic, and bandwidth optimization so your engineering team can focus on the data rather than the protocol plumbing.

Conclusion

EtherNet/IP is more than "Modbus over Ethernet." Its CIP object model provides a rich, typed, hierarchical data architecture. Understanding the difference between implicit and explicit messaging — and knowing when to use each — is the difference between a gateway that polls itself to death and one that efficiently scales to hundreds of tags across dozens of controllers.

The key takeaways:

- Use explicit messaging for configuration reads and tags with intervals > 100ms

- Use implicit messaging for high-frequency process data

- Implement per-tag intervals with compare flags to minimize bandwidth

- Design for failure with retry logic, link state tracking, and periodic forced reads

- Batch before sending — never forward individual tag values to the cloud

Master these patterns and you'll build IIoT integrations that run reliably for years, not demos that break in production.