PLC Alarm Decoding in IIoT: Byte Masking, Bit Fields, and Building Reliable Alarm Pipelines [2026]

Every machine on your plant floor generates alarms. Motor overtemp. Hopper empty. Pressure out of range. Conveyor jammed. These alarms exist as bits in PLC registers — compact, efficient, and completely opaque to anything outside the PLC unless you know how to decode them.

The challenge isn't reading the register. Any Modbus client can pull a 16-bit value from a holding register. The challenge is turning that 16-bit integer into meaningful alarm states — knowing that bit 3 means "high temperature warning" while bit 7 means "emergency stop active," and that some alarms span multiple registers using offset-and-byte-count encoding that doesn't map cleanly to simple bit flags.

This guide covers the real-world techniques for PLC alarm decoding in IIoT systems — the bit masking, the offset arithmetic, the edge detection, and the pipeline architecture that ensures no alarm gets lost between the PLC and your monitoring dashboard.

How PLCs Store Alarms

PLCs don't have alarm objects the way SCADA software does. They have registers — 16-bit integers that hold process data, configuration values, and yes, alarm states. The PLC programmer decides how alarms are encoded, and there are three common patterns.

Pattern 1: Single-Bit Alarms (One Bit Per Alarm)

The simplest and most common pattern. Each bit in a register represents one alarm:

Register 40100 (16-bit value: 0x0089 = 0000 0000 1000 1001)

Bit 0 (value 1): Motor Overload → ACTIVE ✓

Bit 1 (value 0): High Temperature → Clear

Bit 2 (value 0): Low Pressure → Clear

Bit 3 (value 1): Door Interlock Open → ACTIVE ✓

Bit 4 (value 0): Emergency Stop → Clear

Bit 5 (value 0): Communication Fault → Clear

Bit 6 (value 0): Vibration High → Clear

Bit 7 (value 1): Maintenance Due → ACTIVE ✓

Bits 8-15: (all 0) → Clear

To check whether a specific alarm is active, shift the register right by the alarm's bit offset and AND the result with 1:

is_active = (register_value >> bit_offset) & 1

For bit 3 (Door Interlock):

(0x0089 >> 3) & 1 = (0x0011) & 1 = 1 → ACTIVE

For bit 4 (Emergency Stop):

(0x0089 >> 4) & 1 = (0x0008) & 1 = 0 → Clear

This is clean and efficient. One register holds 16 alarms. Two registers hold 32. Most small PLCs can encode all their alarms in 2-4 registers.
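Here is the single-bit decode as a small Python sketch. The bit-to-name map mirrors register 40100 above. `ALARM_BITS`, `is_bit_set`, and `active_alarms` are illustrative names, not part of any library:

```python
# Hypothetical bit map for register 40100 (bits 8-15 unused in this example)
ALARM_BITS = {
    0: "Motor Overload",
    1: "High Temperature",
    2: "Low Pressure",
    3: "Door Interlock Open",
    4: "Emergency Stop",
    5: "Communication Fault",
    6: "Vibration High",
    7: "Maintenance Due",
}


def is_bit_set(register_value: int, bit_offset: int) -> bool:
    """Shift the target bit down to position 0, then test it with & 1."""
    return bool((register_value >> bit_offset) & 1)


def active_alarms(register_value: int) -> list[str]:
    """Return the names of every alarm whose bit is set, in bit order."""
    return [name for bit, name in sorted(ALARM_BITS.items())
            if is_bit_set(register_value, bit)]


print(active_alarms(0x0089))
# ['Motor Overload', 'Door Interlock Open', 'Maintenance Due']
```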

Pattern 2: Multi-Bit Alarm Codes (Encoded Values)

Some PLCs use multiple bits to encode alarm severity or type. Instead of one bit per alarm, a group of bits represents an alarm code:

Register 40200 (value: 0x0034)

Bits 0-3: Feeder Status Code

0x0 = Normal

0x1 = Low material warning

0x2 = Empty hopper

0x3 = Jamming detected

0x4 = Motor fault

Bits 4-7: Dryer Status Code

0x0 = Normal

0x1 = Temperature deviation

0x2 = Dew point high

0x3 = Heater fault

To extract both status codes:

feeder_code = register_value & 0x0F // mask lower 4 bits

dryer_code = (register_value >> 4) & 0x0F // shift right 4, mask lower 4

For value 0x0034:

feeder_code = 0x0034 & 0x0F = 0x04 → Motor fault

dryer_code = (0x0034 >> 4) & 0x0F = 0x03 → Heater fault

This pattern is more compact but harder to decode — you need to know both the bit offset AND the mask width (how many bits represent this alarm).
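Because both the offset and the width are needed, it helps to make them explicit parameters. The following Python sketch assumes the code tables above. `extract_field` and `decode_status` are illustrative names. Codes that are not in the tables are reported as unknown rather than silently mapped to Normal:

```python
FEEDER_CODES = {0x0: "Normal", 0x1: "Low material warning", 0x2: "Empty hopper",
                0x3: "Jamming detected", 0x4: "Motor fault"}
DRYER_CODES = {0x0: "Normal", 0x1: "Temperature deviation",
               0x2: "Dew point high", 0x3: "Heater fault"}


def extract_field(register_value: int, shift: int, width: int) -> int:
    """Extract `width` bits starting at bit `shift`."""
    mask = (1 << width) - 1          # width 4 -> 0x0F
    return (register_value >> shift) & mask


def decode_status(register_value: int) -> tuple[str, str]:
    feeder = extract_field(register_value, shift=0, width=4)
    dryer = extract_field(register_value, shift=4, width=4)
    # Unlisted codes are surfaced explicitly instead of defaulting to "Normal"
    return (FEEDER_CODES.get(feeder, f"Unknown feeder code {feeder:#x}"),
            DRYER_CODES.get(dryer, f"Unknown dryer code {dryer:#x}"))


print(decode_status(0x0034))  # ('Motor fault', 'Heater fault')
```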

Pattern 3: Offset-Array Alarms

For machines with many alarm types — blenders with multiple hoppers, granulators with different zones, chillers with multiple pump circuits — the PLC programmer often uses an array structure where a single tag (register) holds multiple alarm values at different offsets:

Tag ID 5, Register 40300: Alarm Word

Read as an array of values: [value0, value1, value2, value3, ...]

Offset 0: Master alarm (1 = any alarm active)

Offset 1: Hopper 1 high temp

Offset 2: Hopper 1 low level

Offset 3: Hopper 2 high temp

Offset 4: Hopper 2 low level

...

In this pattern, the tag's values arrive as an array (modern IIoT gateways commonly serialize them as JSON). To check a specific alarm:

values = [0, 1, 0, 0, 1, 0, 0, 0]

is_hopper1_high_temp = values[1] // → 1 (ACTIVE)

is_hopper2_low_level = values[4] // → 1 (ACTIVE)

When the offset is 0 and the byte count is also 0, you're looking at a simple scalar: the first value is the alarm state. When the offset is non-zero and the byte count is 0, you index into the array. When the byte count is non-zero, you're doing bit masking on the scalar value, and the byte count field serves as the mask itself:

if (bytes == 0 && offset == 0):
    active = values[0] // Simple: first value is the state
elif (bytes == 0 && offset != 0):
    active = values[offset] != 0 // Array: index by offset
elif (bytes != 0):
    active = (values[0] >> offset) & bytes // Bit masking: shift and mask

This three-way decode logic is the core of real-world alarm processing. Miss any branch and you'll have phantom alarms or blind spots.
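Here is the same three-way logic as a runnable Python function. It is a sketch: the name `decode_alarm` is illustrative, and the `bytes_` parameter is renamed so it does not shadow Python's built-in `bytes`. An empty value list is treated as an error rather than as a clear alarm, so a failed read cannot masquerade as "no alarm":

```python
def decode_alarm(values: list[int], offset: int, bytes_: int) -> bool:
    """Three-way decode: scalar, array-offset, or bit mask.

    `bytes_` is the configuration's "byte count" field; the bit-mask
    branch uses it as the mask value.
    """
    if not values:
        raise ValueError("no values read for this tag")
    if bytes_ == 0 and offset == 0:
        return values[0] != 0                        # simple scalar
    if bytes_ == 0:
        return values[offset] != 0                   # array: index by offset
    return ((values[0] >> offset) & bytes_) != 0     # shift, then mask


values = [0, 1, 0, 0, 1, 0, 0, 0]
print(decode_alarm(values, offset=1, bytes_=0))    # True  (hopper 1 high temp)
print(decode_alarm([0b0100], offset=0, bytes_=4))  # True  (mask 4 matches bit 2)
print(decode_alarm([0b0011], offset=0, bytes_=4))  # False (bit 2 not set)
```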

Building the Alarm Decode Pipeline

A reliable alarm pipeline has four stages: poll, decode, deduplicate, and notify.

Stage 1: Polling Alarm Registers

Alarm registers should be polled more often than general telemetry. Process temperatures can be sampled every 5-10 seconds, but alarm changes, especially on critical states, need to be detected within a second or two.

The practical approach:

- Alarm registers: Poll every 1-2 seconds

- Process data registers: Poll every 5-10 seconds

- Configuration registers: Poll once at startup or on-demand

Group alarm-related tags so they can be read together. If your PLC stores alarm data across tags 5, 6, and 7 and their registers are contiguous (or nearly so), read all three with one Modbus request per poll cycle rather than three separate requests.
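Whether alarm tags can share one request depends on their register addresses. A single Modbus read (for example, function code 03, Read Holding Registers) covers one contiguous block of at most 125 registers. The sketch below plans batch reads by merging nearby addresses. The `max_gap` tolerance, which reads a few unused registers instead of issuing a second request, is an assumption you would tune per device. `plan_reads` is an illustrative name:

```python
MAX_REGS_PER_READ = 125  # Modbus limit for Read Holding Registers (FC03)


def plan_reads(addresses: list[int], max_gap: int = 4) -> list[tuple[int, int]]:
    """Merge register addresses into (start, count) blocks for batch reads.

    An address at most `max_gap` registers past the current block's end is
    merged into that block, as long as the block stays within the limit.
    """
    blocks: list[tuple[int, int]] = []
    for addr in sorted(set(addresses)):
        if blocks:
            start, count = blocks[-1]
            end = start + count - 1
            new_count = addr - start + 1
            if addr - end <= max_gap and new_count <= MAX_REGS_PER_READ:
                blocks[-1] = (start, new_count)   # extend the current block
                continue
        blocks.append((addr, 1))                  # start a new request
    return blocks


print(plan_reads([40300, 40301, 40302]))  # [(40300, 3)]
```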

Stage 2: Decode Each Tag

For each alarm tag received, look up the alarm type definitions — a configuration that maps tag_id + offset + byte_count to an alarm name and decode method.

Example alarm type configuration:

| Alarm Name | Machine Type | Tag ID | Offset | Bytes | Unit |
|---|---|---|---|---|---|
| Motor Overload | Granulator | 5 | 0 | 0 | - |
| High Temperature | Granulator | 5 | 1 | 0 | °F |
| Vibration Warning | Granulator | 5 | 0 | 4 | - |
| Jam Detection | Granulator | 6 | 2 | 0 | - |

The decode logic for each row:

Motor Overload (tag 5, offset 0, bytes 0): active = values[0] — direct scalar

High Temperature (tag 5, offset 1, bytes 0): active = values[1] != 0 — array index

Vibration Warning (tag 5, offset 0, bytes 4): active = (values[0] >> 0) & 4, a bit mask with no shift and a mask value of 4 (binary 100); any non-zero result means active. This checks whether bit 2 (the third bit, value 4 in decimal) is set in the raw alarm word.

Jam Detection (tag 6, offset 2, bytes 0): active = values[2] != 0 — array index on a different tag
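A config-driven version of the table is sketched below. Each row becomes a record, and one evaluator decodes every alarm from a single poll's tag values. This is a Python sketch: `AlarmType` and `evaluate` are illustrative names. The decode folds the scalar and array branches together, since `values[0] != 0` is simply the offset-0 case of `values[offset] != 0`:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlarmType:
    name: str
    machine_type: str
    tag_id: int
    offset: int
    bytes: int   # "byte count"; used as the mask value when non-zero


ALARM_TYPES = [
    AlarmType("Motor Overload", "Granulator", 5, 0, 0),
    AlarmType("High Temperature", "Granulator", 5, 1, 0),
    AlarmType("Vibration Warning", "Granulator", 5, 0, 4),
    AlarmType("Jam Detection", "Granulator", 6, 2, 0),
]


def decode(values: list[int], offset: int, mask: int) -> bool:
    if mask == 0:
        return values[offset] != 0                 # scalar (offset 0) or array index
    return ((values[0] >> offset) & mask) != 0     # bit mask on the first value


def evaluate(tag_values: dict[int, list[int]]) -> dict[str, bool]:
    """Decode every configured alarm from one poll cycle's tag values."""
    return {a.name: decode(tag_values[a.tag_id], a.offset, a.bytes)
            for a in ALARM_TYPES}
```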

Stage 3: Edge Detection and Deduplication

Raw alarm states are level-based — "the alarm IS active right now." But alarm notifications need to be edge-triggered — "the alarm JUST became active."

Without edge detection, every poll cycle generates a notification for every active alarm. A motor overload alarm that stays active for 30 minutes would generate 1,800 notifications at 1-second polling. Your operators will mute alerts within hours.

The edge detection approach:

previous_state = get_cached_state(device_id, alarm_type_id)
current_state = decode_alarm(tag_values, offset, bytes)
if current_state AND NOT previous_state:
    trigger_alarm_activation(alarm)
elif NOT current_state AND previous_state:
    trigger_alarm_clear(alarm)
cache_state(device_id, alarm_type_id, current_state)

Critical: The cached state must survive gateway restarts. Store it in persistent storage (file or embedded database), not just in memory. Otherwise, every reboot triggers a fresh wave of alarm notifications for all currently-active alarms.
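Here is a minimal Python sketch of the edge detector with file-backed state. It assumes a JSON file is acceptable persistent storage on your gateway; an embedded database such as SQLite works the same way. `EdgeDetector` and its key format are illustrative. The state file is written to a temporary file and then swapped in with `os.replace`, so a crash mid-write cannot leave a truncated file:

```python
import json
import os
import tempfile
from typing import Optional


class EdgeDetector:
    """Turns level-based alarm states into activation/clear events."""

    def __init__(self, path: str):
        self.path = path
        try:
            with open(path) as f:
                self.state: dict[str, bool] = json.load(f)
        except FileNotFoundError:
            self.state = {}          # first boot: nothing known yet

    def update(self, device_id: str, alarm_type_id: int,
               current: bool) -> Optional[str]:
        key = f"{device_id}:{alarm_type_id}"
        previous = self.state.get(key, False)
        event = None
        if current and not previous:
            event = "ACTIVATED"
        elif previous and not current:
            event = "CLEARED"
        if event:                    # persist only on change (fewer flash writes)
            self.state[key] = current
            self._save()
        return event

    def _save(self) -> None:
        directory = os.path.dirname(os.path.abspath(self.path))
        fd, tmp = tempfile.mkstemp(dir=directory)
        with os.fdopen(fd, "w") as f:
            json.dump(self.state, f)
        os.replace(tmp, self.path)   # atomic swap on the same filesystem
```

On the very first boot, with no state file, currently active alarms are announced once. That is usually what you want, since nothing has reported them yet.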

Stage 4: Notification and Routing

Not all alarms are equal. A "maintenance due" flag shouldn't page the on-call engineer at 2 AM. A "motor overload on running machine" absolutely should.

Alarm routing rules:

| Severity | Response | Notification |
|---|---|---|
| Critical (E-stop, fire, safety) | Immediate shutdown | SMS + phone call + dashboard |
| High (equipment damage risk) | Operator attention needed | Push notification + dashboard |
| Medium (process deviation) | Investigate within shift | Dashboard + email digest |
| Low (maintenance, informational) | Schedule during downtime | Dashboard only |

The machine's running state matters for alarm priority. An equipment alarm on a stopped machine is often just informational; the same alarm on a running machine can be critical. This context-aware prioritization requires correlating alarm data with the machine's operational state: the running tag, idle state, and whether the machine is in a planned downtime window.
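One way to express the routing table plus running-state context is shown below in Python. The downgrade rule is an assumed policy for illustration, not a standard: non-safety alarms drop to dashboard-only when the machine is stopped or in planned downtime, while Critical safety alarms are never downgraded. `Severity` and `route` are illustrative names:

```python
from enum import IntEnum


class Severity(IntEnum):
    LOW = 0
    MEDIUM = 1
    HIGH = 2
    CRITICAL = 3


CHANNELS = {
    Severity.CRITICAL: ["sms", "phone", "dashboard"],
    Severity.HIGH: ["push", "dashboard"],
    Severity.MEDIUM: ["dashboard", "email_digest"],
    Severity.LOW: ["dashboard"],
}


def route(base: Severity, running: bool, planned_downtime: bool) -> list[str]:
    """Pick notification channels for an alarm, given machine context."""
    effective = base
    # Assumed policy: safety alarms always page; others are informational
    # while the machine is stopped or in a planned downtime window.
    if base < Severity.CRITICAL and (not running or planned_downtime):
        effective = Severity.LOW
    return list(CHANNELS[effective])   # copy so callers can't mutate the table
```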

Machine-Specific Alarm Patterns

Different machine types encode alarms differently. Here are patterns common across industrial equipment:

Blenders and Feeders

Blenders with multiple hoppers generate per-hopper alarms. A 6-hopper batch blender might have:

- Tags 1-6: Per-hopper weight/level values

- Tag 7: Alarm word with per-hopper fault bits

- Tag 8: Master alarm rollup

The number of active hoppers varies by recipe. A machine configured for 4 ingredients only uses hoppers 1-4. Alarms on hoppers 5-6 should be suppressed — they're not connected, and their registers contain stale data.

Discovery pattern: Read the "number of hoppers" or "ingredients configured" register first. Only decode alarms for hoppers 1 through N.
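Here is a Python sketch of that discovery step for the 6-hopper blender above. It assumes, hypothetically, that bit i-1 of the tag 7 alarm word is the fault bit for hopper i, and that the configured-hopper count has already been read. `hopper_alarms` is an illustrative name. An implausible count is rejected, so a garbage read doesn't silently widen or hide the decode:

```python
def hopper_alarms(alarm_word: int, hoppers_configured: int,
                  max_hoppers: int = 6) -> dict[int, bool]:
    """Decode per-hopper fault bits only for hoppers 1..N.

    Assumes bit (i - 1) of the alarm word is the fault bit for hopper i.
    Bits for unconfigured hoppers are ignored (stale data).
    """
    if not 0 <= hoppers_configured <= max_hoppers:
        raise ValueError(f"implausible hopper count: {hoppers_configured}")
    return {h: bool((alarm_word >> (h - 1)) & 1)
            for h in range(1, hoppers_configured + 1)}


# Bits 1, 4, 5 set; with 4 hoppers configured, hoppers 5-6 are suppressed
print(hopper_alarms(0b110010, hoppers_configured=4))
# {1: False, 2: True, 3: False, 4: False}
```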

Temperature Control Units (TCUs)

TCUs typically use the simplest pattern of all: the alert tag is a single scalar where a non-zero value indicates an active alert. There is no bit masking and no offset array:

alert_tag_value = read_tag(tag_id=23)
if alert_tag_value[0] != 0:
    alarm_active = True

This works because TCUs typically have their own built-in alarm logic. The IIoT gateway doesn't need to decode individual fault codes — the TCU has already determined that something is wrong. The gateway just needs to surface that to the operator.

Granulators and Heavy Equipment

Granulators and similar heavy rotating equipment tend to use the full three-pattern decode. They have:

- Simple scalar alarms (is the machine faulted? yes/no)

- Array-offset alarms (which specific fault zone is affected?)

- Bit-masked alarm words (which combination of faults is present?)

All three might exist simultaneously on the same machine, across different tags. Your decode logic must handle them all.

Common Pitfalls in Alarm Pipeline Design

1. Polling the Same Tag Multiple Times

If multiple alarm types reference the same tag_id, don't read the tag separately for each alarm. Read the tag once per poll cycle, then run all alarm type decoders against the cached value. This is especially important over Modbus RTU, where every extra read request adds a full serial round trip, typically 40-50 ms.

Group alarm types by their unique tag_ids:

unique_tags = distinct(alarm_type.tag_id for alarm_type in alarm_types)
for tag_id in unique_tags:
    values = read_register(device, tag_id)
    cache_values(device, tag_id, values)

for alarm_type in alarm_types:
    values = get_cached_values(device, alarm_type.tag_id)
    active = decode(values, alarm_type.offset, alarm_type.bytes)
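The same grouping can be written as runnable Python. `read_register` is passed in as a callable so the sketch runs without hardware; in a real gateway it would wrap your Modbus client's read call. `poll_cycle` and the `AlarmType` tuple are illustrative names:

```python
from collections import namedtuple

AlarmType = namedtuple("AlarmType", "name tag_id offset bytes")


def poll_cycle(read_register, alarm_types):
    """Read each distinct tag once, then decode every alarm from the cache."""
    cache = {}
    for tag_id in sorted({a.tag_id for a in alarm_types}):
        cache[tag_id] = read_register(tag_id)      # one read per unique tag
    results = {}
    for a in alarm_types:
        values = cache[a.tag_id]
        if a.bytes == 0:
            results[a.name] = values[a.offset] != 0                   # scalar/array
        else:
            results[a.name] = ((values[0] >> a.offset) & a.bytes) != 0  # bit mask
    return results
```

With the four alarm types from the configuration table, this issues two reads (tags 5 and 6) per cycle instead of four.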

2. Ignoring the Difference Between Alarm and Active Alarm

Many systems maintain two concepts:

- Alarm: A historical record of what happened and when

- Active Alarm: The current state, right now

Active alarms are tracked in real-time and cleared when the condition resolves. Historical alarms are never deleted — they form the audit trail.

A common mistake is treating the active alarm table as the alarm history. Active alarms should be a thin, frequently-updated state table. Historical alarms should be an append-only log with timestamps for activation, acknowledgment, and clearance.
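Here is a minimal in-memory Python sketch of the split. `active` is the thin current-state table, and `history` is an append-only event log in which each activation, acknowledgment, and clearance is a new row rather than an update. In a real deployment both would live in a database. The class and field names are illustrative:

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class AlarmEvent:
    ts: datetime          # gateway timestamp (UTC)
    device_id: str
    alarm: str
    kind: str             # "ACTIVATED", "ACKNOWLEDGED", or "CLEARED"


class AlarmStore:
    """Active alarms as a small state table; history as an append-only log."""

    def __init__(self) -> None:
        self.active: dict[tuple[str, str], datetime] = {}  # key -> activation time
        self.history: list[AlarmEvent] = []                # never updated or deleted

    def _log(self, ts: datetime, device_id: str, alarm: str, kind: str) -> None:
        self.history.append(AlarmEvent(ts, device_id, alarm, kind))

    def activate(self, device_id: str, alarm: str, ts: datetime) -> None:
        self.active[(device_id, alarm)] = ts
        self._log(ts, device_id, alarm, "ACTIVATED")

    def acknowledge(self, device_id: str, alarm: str, ts: datetime) -> None:
        if (device_id, alarm) in self.active:
            self._log(ts, device_id, alarm, "ACKNOWLEDGED")

    def clear(self, device_id: str, alarm: str, ts: datetime) -> None:
        # Removing the alarm from `active` never touches `history`
        if self.active.pop((device_id, alarm), None) is not None:
            self._log(ts, device_id, alarm, "CLEARED")
```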

3. Not Handling Stale Data

When a gateway loses communication with a PLC, the last-read register values persist in cache. If the alarm pipeline continues using these stale values, it won't detect new alarms or clear resolved ones.

Implement a staleness check:

- Track the timestamp of the last successful read per device

- If data is older than 2× the poll interval, mark all alarms for that device as "UNKNOWN" (not active, not clear — unknown)

- Display UNKNOWN state visually distinct from both ACTIVE and CLEAR on the dashboard
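Here is a Python sketch of the tri-state check. It assumes timestamps come from a monotonic clock such as `time.monotonic()`, so wall-clock adjustments cannot make data look fresh or stale. `AlarmState` and `effective_state` are illustrative names:

```python
from enum import Enum


class AlarmState(Enum):
    ACTIVE = "ACTIVE"
    CLEAR = "CLEAR"
    UNKNOWN = "UNKNOWN"


def effective_state(decoded_active: bool, last_read_ts: float,
                    now: float, poll_interval_s: float) -> AlarmState:
    """Return UNKNOWN once the device's data is older than 2x the poll interval."""
    if now - last_read_ts > 2 * poll_interval_s:
        return AlarmState.UNKNOWN      # stale: neither active nor clear
    return AlarmState.ACTIVE if decoded_active else AlarmState.CLEAR
```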

4. Timestamp Confusion

PLC registers don't carry timestamps. The timestamp is assigned by whatever reads the register — the edge gateway, the cloud API, or the SCADA system.

For alarm accuracy:

- Timestamp at the edge gateway, not in the cloud. Network latency can add seconds (or minutes during connectivity loss) between the actual alarm event and cloud receipt.

- Use the gateway's NTP-synchronized clock. PLCs don't have accurate clocks — some don't have clocks at all.

- Store timestamps in UTC. Convert to local time only at the display layer, using the machine's configured timezone.
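Here is a small Python sketch of "stamp at the edge in UTC, convert only for display." `edge_timestamp` and `for_display` are illustrative names. The stamp is only as accurate as the gateway clock, which is assumed to be NTP-synchronized:

```python
from datetime import datetime, timezone, tzinfo


def edge_timestamp() -> str:
    """Stamp an alarm event on the gateway's clock, in UTC, as ISO 8601."""
    return datetime.now(timezone.utc).isoformat()


def for_display(utc_iso: str, machine_tz: tzinfo) -> str:
    """Convert a stored UTC timestamp to the machine's local time, for display only."""
    local = datetime.fromisoformat(utc_iso).astimezone(machine_tz)
    return local.strftime("%Y-%m-%d %H:%M:%S %Z")
```

For `machine_tz`, a real dashboard would pass something like `zoneinfo.ZoneInfo("America/Chicago")` (Python 3.9+, which needs the system time zone database or the `tzdata` package) rather than a fixed offset, so daylight-saving changes are handled.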

5. Unit Conversion on Alarm Thresholds

If a PLC stores temperature in Fahrenheit and your alarm threshold logic operates in Celsius (or vice versa), every comparison is wrong. This happens more than you'd think in multi-vendor environments where some equipment uses imperial units and others use metric.

Normalize at the edge. Convert all values to SI units (Celsius, kilograms, meters, kPa) before applying alarm logic. This means your alarm thresholds are always in consistent units regardless of the source equipment.

Common conversions that trip people up:

- Weight/throughput: Imperial (lbs/hr) vs. metric (kg/hr). 1 lb = 0.4536 kg.

- Flow: GPM vs. LPM. 1 US GPM = 3.785 LPM.

- Length: ft/min vs. m/min. 1 ft = 0.3048 m.

- Pressure delta: psi to kPa. 1 psi = 6.895 kPa (equivalently, divide psi by 0.145).

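- Temperature delta: a 10°F delta is not a 10°C delta. Convert differences with ΔC = ΔF × 5/9, with no 32-degree offset. The offset applies only to absolute readings: °C = (°F − 32) × 5/9.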
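Here is a Python sketch of edge-side normalization helpers. The important contrast is between `f_to_c` and `delta_f_to_c`. If you apply the absolute formula to an 18°F rise, you get about -7.8°C instead of 10°C. The function names are illustrative. The factors for pounds, US gallons, and feet are exact by definition, and the psi factor is rounded:

```python
LB_TO_KG = 0.45359237        # exact by definition
US_GAL_TO_L = 3.785411784    # exact by definition (US gallon)
FT_TO_M = 0.3048             # exact by definition
PSI_TO_KPA = 6.894757        # rounded


def f_to_c(temp_f: float) -> float:
    """Absolute temperature: remove the 32-degree offset, then scale."""
    return (temp_f - 32.0) * 5.0 / 9.0


def delta_f_to_c(delta_f: float) -> float:
    """Temperature difference: scale only; the offset cancels out."""
    return delta_f * 5.0 / 9.0


def lbs_per_hr_to_kg_per_hr(x: float) -> float:
    return x * LB_TO_KG


def gpm_to_lpm(x: float) -> float:
    return x * US_GAL_TO_L


def ft_per_min_to_m_per_min(x: float) -> float:
    return x * FT_TO_M


def psi_to_kpa(x: float) -> float:
    return x * PSI_TO_KPA
```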

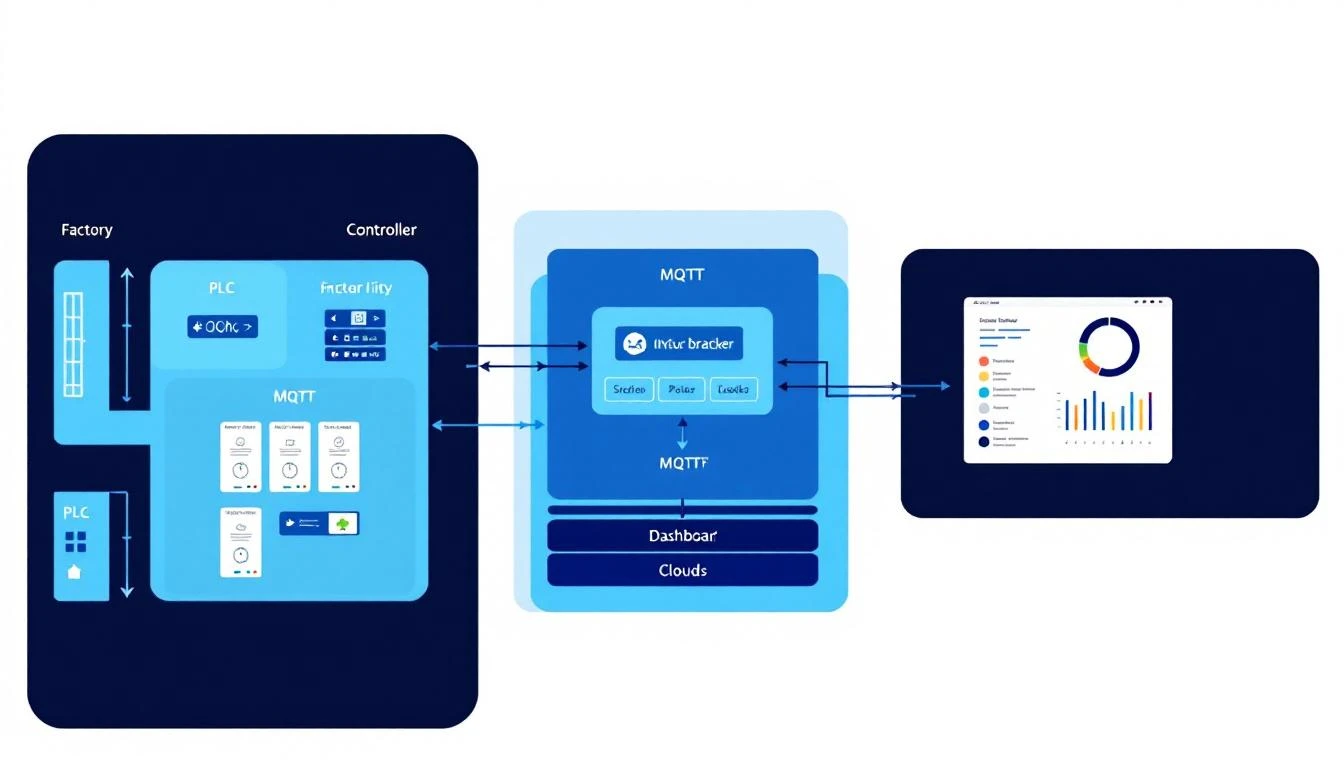

Architecture: From PLC Register to Dashboard Alert

The end-to-end alarm pipeline in a well-designed IIoT system:

PLC Register (bit field)

↓

Edge Gateway (poll + decode + edge detect)

↓

Local Buffer (persist if cloud is unreachable)

↓

Cloud Ingestion (batch upload with timestamps)

↓

Alarm Service (route + prioritize + notify)

↓

Dashboard / SMS / Email

The critical path: PLC → Gateway → Operator. Everything else (cloud storage, analytics, history) is important but secondary. If the cloud goes down, the gateway must still detect alarms, log them locally, and trigger local notifications (buzzer, light tower, SMS via cellular).
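The local-buffer stage can be sketched with Python's built-in `sqlite3` module. This is an illustrative store-and-forward queue, not machineCDN's implementation. Events are deleted only after the `upload` callable returns without raising. A cloud outage therefore leaves them queued, and they are re-sent oldest-first when connectivity returns. `AlarmBuffer` is a hypothetical name, and `upload` stands in for your cloud client:

```python
import json
import sqlite3
from typing import Callable


class AlarmBuffer:
    """Store-and-forward queue: events persist locally until upload succeeds."""

    def __init__(self, path: str = "alarm_buffer.db"):
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS outbox ("
            " id INTEGER PRIMARY KEY AUTOINCREMENT, payload TEXT NOT NULL)")
        self.db.commit()

    def enqueue(self, event: dict) -> None:
        with self.db:  # commits on success, rolls back on error
            self.db.execute("INSERT INTO outbox (payload) VALUES (?)",
                            (json.dumps(event),))

    def flush(self, upload: Callable[[list], None], batch_size: int = 100) -> int:
        """Send buffered events oldest-first; return how many were delivered.

        If `upload` raises, the exception propagates and the batch stays queued.
        """
        sent = 0
        while True:
            rows = self.db.execute(
                "SELECT id, payload FROM outbox ORDER BY id LIMIT ?",
                (batch_size,)).fetchall()
            if not rows:
                return sent
            upload([json.loads(p) for _, p in rows])
            with self.db:  # delete only what was just delivered
                self.db.execute("DELETE FROM outbox WHERE id <= ?", (rows[-1][0],))
            sent += len(rows)
```

This gives at-least-once delivery. If the gateway dies between a successful upload and the delete, that batch is sent again, so the cloud side should deduplicate, for example on an event ID.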

machineCDN implements this architecture with its edge gateway handling the decode and buffering layers, ensuring alarm data is never lost even during connectivity gaps. The gateway maintains PLC communication state, handles the three-pattern alarm decode natively, and batches alarm events for efficient cloud delivery.

Testing Your Alarm Pipeline

Before deploying to production, test every alarm path:

- Force each alarm in the PLC (using the PLC programming software) and verify it appears on the dashboard within your target latency

- Clear each alarm and verify the dashboard reflects the clear state

- Disconnect the PLC (pull the Ethernet cable or RS-485 connector) and verify alarms transition to UNKNOWN, not CLEAR

- Reconnect the PLC while alarms are active and verify they immediately show as ACTIVE without requiring a transition through CLEAR first

- Restart the gateway while alarms are active and verify no duplicate alarm notifications are generated

- Simulate cloud outage and verify alarms are buffered locally and delivered in order when connectivity returns

If any of these tests fail, your alarm pipeline has a gap. Fix it before your operators learn to ignore alerts.

Conclusion

PLC alarm decoding is unglamorous work — bit masking, offset arithmetic, edge detection. It's not the part of IIoT that makes it into the keynote slides. But it's the part that determines whether your monitoring system catches a motor overload at 2 AM or lets it burn out a $50,000 gearbox.

The three-pattern decode (scalar, array-offset, bit-mask) covers the vast majority of industrial equipment. Get this right at the edge gateway layer, add proper edge detection and staleness handling, and your alarm pipeline will be as reliable as the hardwired annunciators it's replacing.

machineCDN's edge gateway decodes alarm registers from any PLC over Modbus RTU or TCP, with configurable alarm type mappings, automatic edge detection, and store-and-forward buffering. No alarms lost, no false positives from stale data.