Most IIoT deployments start the same way: poll every PLC register every second, serialize all values to JSON, and push everything to the cloud over MQTT. It works — until your cellular data bill arrives, or your broker starts choking on 500,000 messages per day from a single gateway, or you realize that 95% of those messages contain values that haven't changed since the last read.

The reality of industrial data is that most values don't change most of the time. A chiller's tank temperature drifts by a fraction of a degree per minute. A blender's motor state is "running" for 8 hours straight. A conveyor's alarm register reads zero all day — until the instant it doesn't, and that instant matters more than the previous 86,400 identical readings.

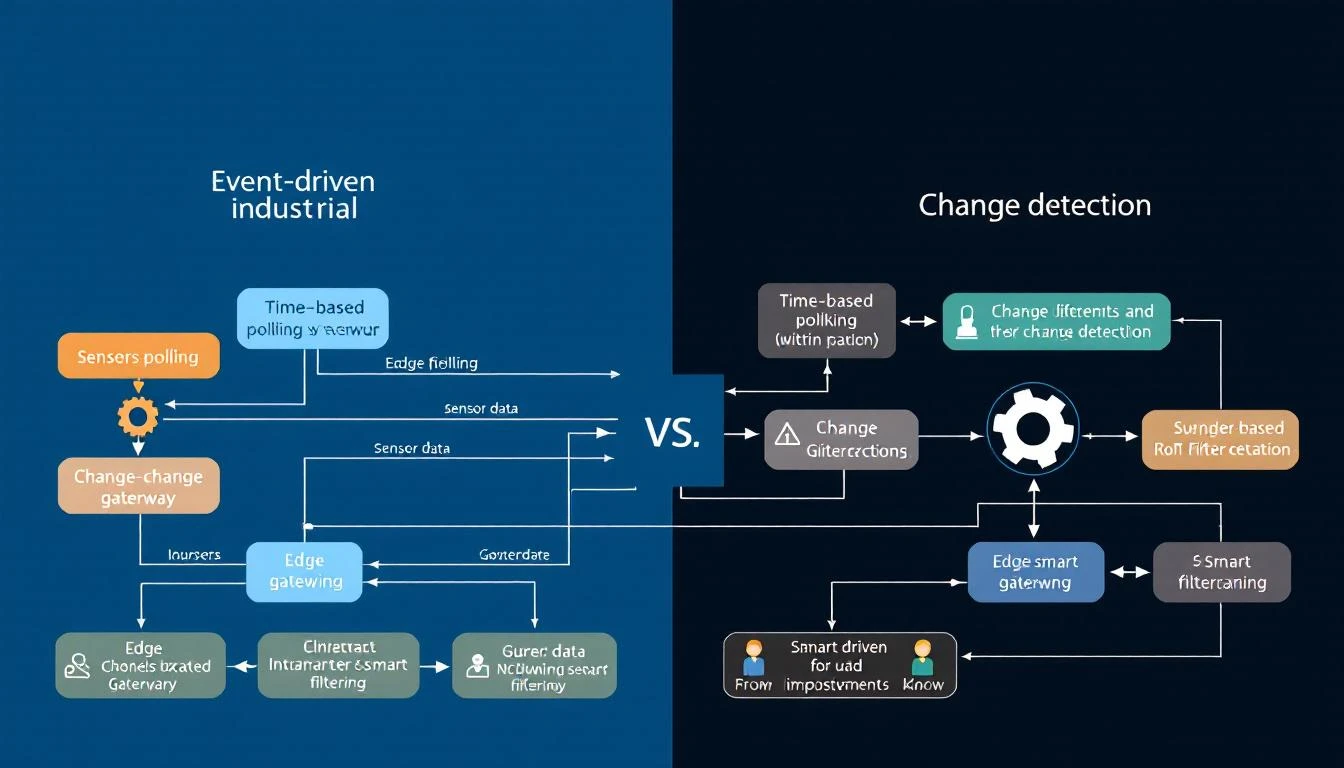

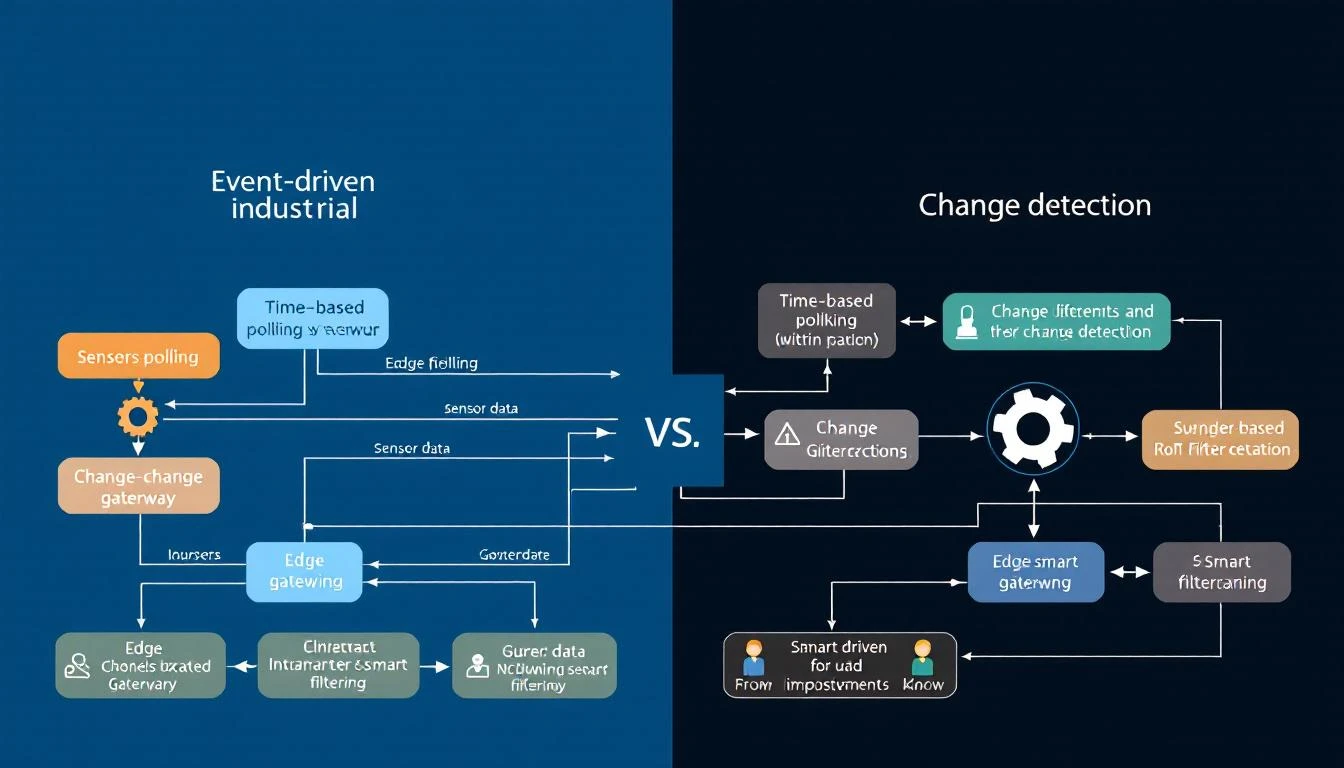

This guide covers a smarter approach: event-driven tag delivery, where the edge gateway reads at regular intervals but only transmits when something actually changes — and when something does change, it can trigger reads of related tags for complete context.

The Problem with Fixed-Interval Everything

Let's quantify the waste. Consider a typical industrial chiller with 10 compressor circuits, each exposing 16 process tags (temperatures, pressures, flow rates) and 3 alarm registers:

Tags per circuit: 16 process + 3 alarm = 19 tags

Total tags: 10 circuits × 19 = 190 tags

Poll interval: All at 1 second

In JSON, with a timestamp, tag ID, and value, each data point is roughly 50 bytes. Per second, that's:

```
190 tags × 50 bytes = 9,500 bytes/second
                    = 570 KB/minute
                    = 34.2 MB/hour
                    = 821 MB/day
```

Over a cellular connection at $5/GB, that's $4.10/day per chiller — just for data that's overwhelmingly identical to what was sent one second ago.
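The arithmetic above can be reproduced with a few lines of Python. This is only a sketch: the 50-byte point size and the $5/GB rate are the rough figures from the text, the function names are made up for illustration, and gigabytes are taken as decimal (10^9 bytes).

```python
# Figures from the chiller example above; adjust for your own site.
TAGS = 10 * (16 + 3)        # 10 circuits x 19 tags each
BYTES_PER_POINT = 50        # rough JSON size of one data point
PRICE_PER_GB = 5.00         # USD, illustrative cellular rate


def daily_bytes(tags: int, bytes_per_point: int, poll_seconds: float = 1.0) -> float:
    """Bytes sent per day when every tag is transmitted on every poll."""
    polls_per_day = 86_400 / poll_seconds
    return tags * bytes_per_point * polls_per_day


def daily_cost(total_bytes: float, price_per_gb: float) -> float:
    """Cost in USD, using decimal gigabytes (1 GB = 10**9 bytes)."""
    return total_bytes / 1e9 * price_per_gb


baseline = daily_bytes(TAGS, BYTES_PER_POINT)
print(f"{baseline / 1e6:.1f} MB/day, ${daily_cost(baseline, PRICE_PER_GB):.2f}/day")
# prints: 820.8 MB/day, $4.10/day
```

Changing `poll_seconds` shows how far a slower fixed interval gets you on its own, which is a useful baseline before adding change detection.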

Now let's separate the tags by their actual change frequency:

| Tag Type | Count | Actual Change Frequency | % of Total Data |
|---|---|---|---|
| Process temperatures | 100 | Every 30-60 seconds | 52.6% |
| Process pressures | 50 | Every 10-30 seconds | 26.3% |
| Flow rates | 10 | Every 5-15 seconds | 5.3% |
| Alarm registers | 30 | ~1-5 times per day | 15.8% |

Those 30 alarm registers — 15.8% of your data volume — change roughly 5 times per day. You're transmitting them 86,400 times. That's a 17,280x overhead on alarm data.

The Three Pillars of Event-Driven Delivery

A well-designed edge gateway implements three complementary strategies:

1. Compare-on-Read (Change Detection)

The simplest optimization: after reading a tag value from the PLC, compare it against the last transmitted value. If it hasn't changed, don't send it.

The implementation is straightforward:

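```python
def should_deliver(tag, new_value, new_status):
    # First read after startup (or after a forced reset): always send.
    if not tag.has_been_read:
        return True
    # A change in read status is always worth sending.
    if tag.last_status != new_status:
        return True
    if tag.compare_enabled:
        # Change detection on: send only when the value differs.
        return tag.last_value != new_value
    # Change detection off: send every reading.
    return True
```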
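To see the decision function in context, here is a minimal, self-contained sketch. The `Tag` dataclass and `process_read` helper are hypothetical; only the field names come from the function above. The sketch assumes the stored value is updated only when a reading is delivered, so the comparison is always against the last transmitted value.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class Tag:
    """Hypothetical per-tag state; field names mirror the function above."""
    name: str
    compare_enabled: bool = True
    has_been_read: bool = False
    last_value: Any = None
    last_status: Optional[int] = None


def should_deliver(tag: Tag, new_value: Any, new_status: int) -> bool:
    if not tag.has_been_read:
        return True
    if tag.last_status != new_status:
        return True
    if tag.compare_enabled:
        return tag.last_value != new_value
    return True


def process_read(tag: Tag, new_value: Any, new_status: int, outbox: list) -> None:
    """Queue the reading if it should go out, then remember what was sent."""
    if should_deliver(tag, new_value, new_status):
        outbox.append((tag.name, new_value, new_status))
        # Updating state only on delivery keeps last_value equal to the
        # last *transmitted* value.
        tag.last_value = new_value
        tag.last_status = new_status
    tag.has_been_read = True


outbox: list = []
alarm = Tag("alarm_word_1")
for value in [0, 0, 0, 4, 4, 0]:
    process_read(alarm, value, new_status=0, outbox=outbox)

print([v for _, v, _ in outbox])  # prints: [0, 4, 0]
```

Six reads produce three deliveries: the initial value, the transition to 4, and the return to 0.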

Which tags should use change detection?

- Alarm/status registers: Always. These are event-driven by nature — you need the transitions, not the steady state.

- Digital I/O: Always. Binary values either changed or they didn't.

- Configuration registers: Always. Software version numbers, setpoints, and device parameters change rarely.

- Temperatures and pressures: Situational. If the process is stable, most readings are identical. But if you need trending data for analytics, you may want periodic delivery regardless.

- Counter registers: Never. Counters increment continuously — every reading is "different" — and you need the raw values for accurate rate calculations.

The gotcha with floating-point comparison: Comparing IEEE 754 floats for exact equality is unreliable due to rounding. For float-typed tags, use a deadband:

```python
def float_changed(old_val, new_val, deadband=0.1):
    return abs(new_val - old_val) > deadband
```

A temperature deadband of 0.1°F means you'll transmit when the temperature moves meaningfully, but ignore sensor noise.
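One detail worth sketching is which value the deadband is measured against. The hypothetical `DeadbandFilter` below assumes it is the last reported value, not the previous reading, so a slow drift is still reported once it adds up to more than the deadband. The NaN handling is an extra assumption, included because NaN never compares as greater than anything.

```python
import math
from typing import Optional


class DeadbandFilter:
    """Reports a change only when the value moves beyond the deadband
    relative to the last *reported* value (an assumption of this sketch)."""

    def __init__(self, deadband: float = 0.1):
        self.deadband = deadband
        self.last_reported: Optional[float] = None

    def changed(self, new_val: float) -> bool:
        if self.last_reported is None:
            self.last_reported = new_val
            return True
        # A transition into or out of NaN counts as a change.
        if math.isnan(new_val) != math.isnan(self.last_reported):
            self.last_reported = new_val
            return True
        if abs(new_val - self.last_reported) > self.deadband:
            self.last_reported = new_val
            return True
        return False


# Each step is 0.04 degrees, well inside the 0.1 deadband.
readings = [72.00, 72.04, 72.08, 72.12, 72.16, 72.20]
filt = DeadbandFilter(deadband=0.1)
sent = [r for r in readings if filt.changed(r)]
print(sent)  # prints: [72.0, 72.12]
```

Comparing against the previous reading instead would have reported nothing after the first value, which is one way the slow divergence discussed later in this guide can arise.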

2. Dependent Tags (Contextual Reads)

Here's where event-driven delivery gets powerful. Consider this scenario:

A chiller's compressor status word is a 16-bit register where each bit represents a different state: running, loaded, alarm, lockout, etc. You poll this register every second with change detection enabled. When bit 7 flips from 0 to 1 (alarm condition), you need more than just the status word — you need the discharge pressure, suction temperature, refrigerant level, and superheat at that exact moment to diagnose the alarm.

The solution: dependent tag chains. When a parent tag's value changes, the gateway immediately triggers a forced read of all dependent tags, delivering the complete snapshot:

```
Parent Tag: Compressor Status Word (polled every 1s, compare=true)
Dependent Tags:
├── Discharge Pressure (read only when status changes)
├── Suction Temperature (read only when status changes)
├── Refrigerant Liquid Temp (read only when status changes)
├── Superheat (read only when status changes)
└── Subcool (read only when status changes)
```

In normal operation, the gateway reads only the status word — one register per second per compressor. When the status word changes, it reads 6 registers total and delivers them as a single timestamped group. The result:

- Steady state: 1 register/second → 50 bytes/second

- Event triggered: 6 registers at once → 300 bytes (once, at the moment of change)

- vs. polling everything: 6 registers/second → 300 bytes/second (continuously)

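Bandwidth savings: reading one register instead of six cuts steady-state traffic by about 83% (50 vs. 300 bytes/second). Because the status word itself uses change detection, transmitted traffic during steady state falls close to zero, and the full snapshot is still captured at the moment that matters.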
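A dependent tag chain can be sketched in a few lines. Everything here is illustrative: `ParentTag`, the tag names, and the `read` callback (which stands in for whatever PLC driver call the gateway uses) are assumptions, not a real gateway API.

```python
from typing import Callable, Dict, List, Optional

ReadFn = Callable[[str], int]  # hypothetical: reads one tag by name from the PLC


class ParentTag:
    def __init__(self, name: str, dependents: List[str]):
        self.name = name
        self.dependents = dependents
        self.last_value: Optional[int] = None

    def poll(self, read: ReadFn, timestamp: int) -> Optional[Dict]:
        """Read the parent; on change, read dependents and return one snapshot."""
        value = read(self.name)
        if value == self.last_value:
            return None  # steady state: nothing else is read or sent
        self.last_value = value
        values = {self.name: value}
        for dep in self.dependents:
            values[dep] = read(dep)  # forced read, only on parent change
        return {"ts": timestamp, "values": values}


# Usage with a dict standing in for the PLC
plc = {"status_word": 0x0003, "discharge_pressure": 245, "suction_temp": 42}
reads: List[str] = []


def fake_read(name: str) -> int:
    reads.append(name)
    return plc[name]


parent = ParentTag("status_word", ["discharge_pressure", "suction_temp"])
first = parent.poll(fake_read, timestamp=1709335200)   # first read counts as a change
second = parent.poll(fake_read, timestamp=1709335201)  # unchanged, returns None
plc["status_word"] |= 1 << 7                           # alarm bit sets
third = parent.poll(fake_read, timestamp=1709335202)   # full snapshot again
```

Across the three polls the fake PLC sees seven reads in total: three for each snapshot and one for the quiet cycle in between.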

3. Calculated Tags (Bit-Level Decomposition)

Industrial PLCs often pack multiple boolean signals into a single 16-bit or 32-bit "status word" or "alarm word." Each bit has a specific meaning defined in the PLC program documentation:

```
Alarm Word (uint16):
  Bit 0: High Temperature Alarm
  Bit 1: Low Pressure Alarm
  Bit 2: Flow Switch Fault
  Bit 3: Motor Overload
  Bit 4: Sensor Open Circuit
  Bit 5: Communication Fault
  Bits 6-15: Reserved
```

A naive approach reads the entire word and sends it to the cloud, leaving the bit-level parsing to the backend. A better approach: the edge gateway decomposes the word into individual boolean tags at read time.

The gateway reads the parent tag (the alarm word), and for each calculated tag, it applies a shift and mask operation to extract the individual bit:

Individual Alarm = (alarm_word >> bit_position) & mask

Each calculated tag gets its own change detection. So when Bit 2 (Flow Switch Fault) transitions from 0 to 1, the gateway transmits only that specific alarm — not the entire word, and not any unchanged bits.
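The shift-and-mask step with per-bit change detection might look like the sketch below. The bit names come from the example alarm word above; the function names are hypothetical, and the default mask of `0x1` assumes each signal is a single bit.

```python
from typing import Dict, List, Tuple

# Bit names taken from the example alarm word above.
ALARM_BITS: Dict[int, str] = {
    0: "high_temperature",
    1: "low_pressure",
    2: "flow_switch_fault",
    3: "motor_overload",
    4: "sensor_open_circuit",
    5: "communication_fault",
}


def extract_bit(word: int, bit_position: int, mask: int = 0x1) -> int:
    """Shift the wanted bit down to position 0, then mask off the rest."""
    return (word >> bit_position) & mask


def changed_alarms(previous: int, current: int) -> List[Tuple[str, int]]:
    """Return (alarm_name, new_state) for each named bit that changed."""
    changes = []
    for bit, name in ALARM_BITS.items():
        old, new = extract_bit(previous, bit), extract_bit(current, bit)
        if old != new:
            changes.append((name, new))
    return changes


# Low Pressure (bit 1) was already active; Flow Switch Fault (bit 2) sets.
print(changed_alarms(0b000010, 0b000110))  # prints: [('flow_switch_fault', 1)]
```

The already-active low pressure alarm is not retransmitted, because its bit did not change.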

Why this matters at scale: A 10-circuit chiller has 30 alarm registers (3 per circuit), each 16 bits wide. That's up to 480 individual alarm conditions. Under naive fixed-interval polling, every cycle transmits all 30 registers whether or not anything changed. Even with register-level change detection, a single bit flip resends the full 16-bit word and leaves the backend to work out which bit moved. With calculated tags, only the one changed boolean is transmitted.

Batching: Grouping Efficiency

Even with change detection, transmitting each changed tag as an individual MQTT message creates excessive overhead. MQTT headers, TLS framing, and TCP acknowledgments add 80-100 bytes of overhead per message. A 50-byte tag value in a 130-byte envelope is 62% overhead.

The solution: time-bounded batching. The gateway accumulates changed tag values into a batch, then transmits the batch when either:

- The batch reaches a size threshold (e.g., 4KB of accumulated data)

- A time limit expires (e.g., 10-30 seconds since the batch started collecting)
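The two flush conditions can be sketched as a small class. `Batcher` and its parameter names are hypothetical, the size estimate simply uses the compact JSON length of each item, and the clock is injectable so the time limit can be exercised without waiting.

```python
import json
import time
from typing import Callable, List, Optional


class Batcher:
    def __init__(self, max_bytes: int = 4096, max_age_s: float = 10.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_bytes = max_bytes
        self.max_age_s = max_age_s
        self.clock = clock
        self.items: List[dict] = []
        self.size = 0
        self.started: Optional[float] = None

    def add(self, item: dict) -> Optional[List[dict]]:
        """Add one changed value; return a batch if a threshold was hit."""
        if self.started is None:
            self.started = self.clock()  # the age limit runs from the first item
        self.items.append(item)
        self.size += len(json.dumps(item, separators=(",", ":")))
        return self.flush_if_due()

    def flush_if_due(self) -> Optional[List[dict]]:
        """Also call this from a periodic timer so quiet periods still flush."""
        if self.started is None:
            return None
        too_big = self.size >= self.max_bytes
        too_old = self.clock() - self.started >= self.max_age_s
        if too_big or too_old:
            batch, self.items, self.size, self.started = self.items, [], 0, None
            return batch
        return None


# Usage with a fake clock
fake_now = [0.0]
batcher = Batcher(max_bytes=4096, max_age_s=10.0, clock=lambda: fake_now[0])
batcher.add({"id": 1, "values": [245]})
fake_now[0] = 5.0
batcher.add({"id": 6, "values": [187]})
fake_now[0] = 10.0
batch = batcher.flush_if_due()  # time limit reached: both items are returned
```

Checking the age from a timer matters because, with change detection, minutes can pass without a new value arriving to trigger the check in `add`.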

The batch structure groups values by timestamp:

```json
{
  "groups": [
    {
      "ts": 1709335200,
      "device_type": 1018,
      "serial_number": 2411001,
      "values": [
        {"id": 1, "values": [245]},
        {"id": 6, "values": [187]},
        {"id": 7, "values": [42]}
      ]
    }
  ]
}
```

Critical exception: alarm tags bypass batching. When a status register changes, you don't want the alarm notification sitting in a batch buffer for 30 seconds. Alarm tags should be marked as do_not_batch — they're serialized and transmitted immediately as individual messages with QoS 1 delivery confirmation.

This creates a two-tier delivery system:

| Data Type | Delivery | Latency | Batching |
|---|---|---|---|
| Process values | Change-detected, batched | 10-30 seconds | Yes |
| Alarm/status bits | Change-detected, immediate | <1 second | No |
| Periodic values | Time-based, batched | 10-60 seconds | Yes |
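The routing decision behind the two tiers is small enough to sketch directly. The `route` function and its arguments are hypothetical; `publish_now` stands in for whatever immediate publish call the gateway uses (for example an MQTT publish at QoS 1).

```python
from typing import Callable, List


def route(tag_name: str, value: int, do_not_batch: bool,
          publish_now: Callable[[dict], None], batch: List[dict]) -> None:
    """Send alarm-class tags immediately; queue everything else for the batch."""
    point = {"tag": tag_name, "value": value}
    if do_not_batch:
        publish_now(point)   # immediate path, bypasses the batch buffer
    else:
        batch.append(point)  # waits for the size or time threshold


# Usage: a list's append method stands in for the real publish call
sent_now: List[dict] = []
pending: List[dict] = []
route("flow_switch_fault", 1, True, sent_now.append, pending)
route("tank_temperature", 72, False, sent_now.append, pending)
```

After these two calls the alarm is in `sent_now` and the temperature is in `pending`.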

Binary vs. JSON: The Encoding Decision

The batch payload format has a surprisingly large impact on bandwidth. Consider a batch with 50 tag values:

JSON format:

{"groups":[{"ts":1709335200,"device_type":1018,"serial_number":2411001,"values":[{"id":1,"values":[245]},{"id":2,"values":[187]},...]}]}

Typical size: 2,500-3,000 bytes for 50 values

Binary format:

```
Header: 1 byte (magic byte 0xF7)
Group count: 4 bytes
Per group:
  Timestamp: 4 bytes
  Device type: 2 bytes
  Serial number: 4 bytes
  Value count: 4 bytes
  Per value:
    Tag ID: 2 bytes
    Status: 1 byte
    Value count: 1 byte
    Value size: 1 byte (1=bool/int8, 2=int16, 4=int32/float)
    Values: 1-4 bytes each
```

Typical size: 400-600 bytes for 50 values

That's roughly a 5-7x reduction, from about 3 KB to about 500 bytes per batch. Over cellular, this is transformative. A gateway that transmits 34 MB/hour in JSON drops to roughly 5-7 MB/hour in binary, before even accounting for change detection.
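As a rough illustration of the layout above, the following sketch packs one group with Python's `struct` module. Several things here are assumptions rather than part of any documented format: big-endian byte order, unsigned header fields, signed integer values only (no floats), and the function names themselves.

```python
import struct
from typing import List, Tuple

MAGIC = 0xF7
# (tag_id, status, value_size_bytes, values); integers only in this sketch
Value = Tuple[int, int, int, List[int]]
INT_FORMATS = {1: "b", 2: "h", 4: "i"}  # signed int8 / int16 / int32


def encode_group(ts: int, device_type: int, serial: int, values: List[Value]) -> bytes:
    # Group header: timestamp (4), device type (2), serial (4), value count (4)
    out = bytearray(struct.pack(">IHII", ts, device_type, serial, len(values)))
    for tag_id, status, size, vals in values:
        # Value header: tag ID (2), status (1), value count (1), value size (1)
        out += struct.pack(">HBBB", tag_id, status, len(vals), size)
        out += struct.pack(f">{len(vals)}{INT_FORMATS[size]}", *vals)
    return bytes(out)


def encode_batch(groups: List[bytes]) -> bytes:
    # Batch header: magic byte (1), group count (4)
    return struct.pack(">BI", MAGIC, len(groups)) + b"".join(groups)


# 50 tags, each carrying a single int16 value
values = [(tag_id, 0, 2, [245]) for tag_id in range(1, 51)]
payload = encode_batch([encode_group(1709335200, 1018, 2411001, values)])
print(len(payload))  # prints: 369
```

With 4-byte values the same batch comes to 469 bytes, which falls within the typical range quoted above.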

The trade-off: binary payloads require a schema-aware decoder on the cloud side. Both the gateway and the backend must agree on the encoding format. In practice, most production IIoT platforms use binary encoding for device-to-cloud telemetry and JSON for cloud-to-device commands (where human readability matters and message volume is low).

The Hourly Reset: Catching Drift

One subtle problem with pure change detection: if a value drifts by tiny increments — each below the comparison threshold — the cloud's cached value can slowly diverge from reality. After hours of accumulated micro-drift, the dashboard shows 72.3°F while the actual temperature is 74.1°F.

The solution: periodic forced reads. Every hour (or at another configurable interval), the gateway resets all "read once" flags and forces a complete read of every tag, delivering all current values regardless of change. This acts as a synchronization pulse that corrects any accumulated drift and confirms that all devices are still online.

The hourly reset typically generates one large batch — a snapshot of all 190 tags — adding roughly 10-15KB once per hour. That's negligible compared to the savings from change detection during the other 3,599 seconds.
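One way to sketch the periodic reset is a timer that clears each tag's first-read flag, so the next poll delivers every value unconditionally. `ResyncTimer` is a hypothetical name, the `has_been_read` attribute is borrowed from the change-detection function earlier, and the clock is injectable for testing.

```python
import time
from types import SimpleNamespace
from typing import Callable, Iterable


class ResyncTimer:
    def __init__(self, interval_s: float = 3600.0,
                 clock: Callable[[], float] = time.monotonic):
        self.interval_s = interval_s
        self.clock = clock
        self.last_reset = clock()

    def maybe_reset(self, tags: Iterable) -> bool:
        """Clear has_been_read on every tag once per interval."""
        current = self.clock()
        if current - self.last_reset < self.interval_s:
            return False
        for tag in tags:
            tag.has_been_read = False  # next poll delivers unconditionally
        self.last_reset = current
        return True


# Usage with a fake clock and stand-in tag objects
fake_now = [0.0]
tags = [SimpleNamespace(name=f"tag_{i}", has_been_read=True) for i in range(3)]
timer = ResyncTimer(interval_s=3600.0, clock=lambda: fake_now[0])

fake_now[0] = 1800.0
early = timer.maybe_reset(tags)  # False: only half an hour has passed
fake_now[0] = 3600.0
due = timer.maybe_reset(tags)    # True: all flags cleared
```

Calling `maybe_reset` at the top of each poll cycle keeps the reset logic out of the per-tag comparison code.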

Quantifying the Savings

Let's revisit our 10-circuit chiller example with event-driven delivery:

Before (fixed interval, everything at 1s):

```
190 tags × 86,400 seconds × 50 bytes = 821 MB/day
```

After (event-driven with change detection):

```
Process values:  160 tags × avg 2 changes/min × 1440 min × 50 bytes = 23 MB/day
Alarm registers: 30 tags × avg 5 changes/day × 50 bytes             = 7.5 KB/day
Hourly resets:   190 tags × 24 resets × 50 bytes                    = 228 KB/day
Overhead (headers, keepalives):                                     ≈ 2 MB/day
──────────────────────────────────────────────────────
Total:                                                              ≈ 25.3 MB/day
```

With binary encoding instead of JSON:

```
≈ 25.3 MB/day ÷ 5.5 (binary encoding factor) ≈ 4.6 MB/day
```

Net reduction: 821 MB → 4.6 MB = 99.4% bandwidth savings.

On a $5/GB cellular plan, that's $4.10/day → $0.02/day per chiller.
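The estimate can be checked with a short script. All inputs are the rough figures used in this section (50 bytes per point, 2 changes per minute per process tag, 2 MB/day of overhead, a 5.5x encoding factor), so treat the result as an order-of-magnitude sketch, not a measurement.

```python
BYTES_PER_POINT = 50

process = 160 * 2 * 1440 * BYTES_PER_POINT   # 160 tags, 2 changes/min
alarms = 30 * 5 * BYTES_PER_POINT            # 30 registers, 5 changes/day
resets = 190 * 24 * BYTES_PER_POINT          # hourly full snapshot
overhead = 2_000_000                         # headers and keepalives (estimate)

total_json = process + alarms + resets + overhead
total_binary = total_json / 5.5              # assumed binary encoding factor
baseline = 190 * 86_400 * BYTES_PER_POINT    # everything at 1 s, in JSON

saving = 1 - total_binary / baseline
print(f"{total_json / 1e6:.1f} MB JSON, {total_binary / 1e6:.1f} MB binary, "
      f"{saving * 100:.1f}% saved")
# prints: 25.3 MB JSON, 4.6 MB binary, 99.4% saved
```

The process values dominate the total, so the assumed change rate for those 160 tags is the input most worth measuring on a real site.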

Implementation Checklist

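If you're building or evaluating an edge gateway for event-driven tag delivery, here's what to look for:

- Per-tag polling interval and change detection flag, with a deadband for float-typed tags

- Dependent tags that are read when a parent tag changes

- Calculated tags that split status and alarm words into individual booleans

- Batching with both a size threshold and a time limit

- Immediate, unbatched delivery for alarm tags (QoS 1)

- A compact binary encoding option alongside JSON

- Periodic forced reads that resynchronize every value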

How machineCDN Handles Event-Driven Delivery

machineCDN's edge gateway implements all of these strategies natively. Every tag in the device configuration carries its own polling interval, change detection flag, and batch/immediate delivery preference. Alarm registers are automatically configured for 1-second polling with change detection and immediate delivery. Process values use configurable intervals with batched transmission. The gateway supports both JSON and compact binary encoding, with automatic store-and-forward buffering that retains data through connectivity outages.

The result: plants running machineCDN gateways over cellular connections typically see 95-99% lower data volumes compared to naive fixed-interval polling — without losing a single alarm event or meaningful process change.

Tired of paying for the same unchanged data point 86,400 times a day? machineCDN delivers only the data that matters — alarms instantly, process values on change, with full periodic sync. See how much bandwidth you can save.