MQTT has become the dominant messaging protocol for Industrial IoT — and for good reason. It's lightweight enough to run on resource-constrained edge gateways, resilient enough to handle flaky cellular connections on remote sites, and flexible enough to carry everything from a single boolean alarm bit to a 500-tag batch payload from a production line.

But deploying MQTT in an industrial environment is fundamentally different from using it for consumer IoT. The stakes are higher, the data patterns are more complex, and getting the architecture wrong can mean lost production data or, worse, missed safety alarms.

This guide covers everything a plant engineer or controls integrator needs to know about running MQTT in production — from QoS level selection to broker architecture to the Sparkplug B specification that's finally bringing standardization to industrial MQTT payloads.

Why MQTT Won the Industrial IoT Protocol War

Before diving into the technical details, it's worth understanding why MQTT displaced so many competing approaches. Traditional industrial data collection relied on polling — a SCADA system or historian would periodically query PLCs via Modbus or OPC-DA, pulling register values on a fixed schedule.

This polling model has several problems at scale:

- Bandwidth waste: Most register values don't change between polls. A temperature sensor reading 72.4°F doesn't need to be transmitted every second if it hasn't moved.

- Latency on critical events: With a 1-second poll cycle, a compressor fault that fires 500ms after a poll goes unseen for another 500ms, and for longer if the poll cycle is slower.

- Scaling headaches: Every additional client polling the same PLC adds load. With 20 systems all querying the same controller, you're burning CPU cycles on the PLC answering redundant requests.

MQTT inverts this model. Instead of clients pulling data, edge devices publish data when it changes (or on a configurable interval), and any number of subscribers can consume that data without adding load to the source device.

The key insight that makes this work in industrial settings is change-of-value detection combined with periodic heartbeats. A well-designed edge gateway will:

- Read PLC tags on a fast cycle (typically 1-second intervals for critical tags)

- Compare each reading against the last delivered value

- Only publish to MQTT when a value actually changes

- Still publish unchanged values periodically (hourly is common) to confirm the tag is still being read and the data path is alive

This approach dramatically reduces bandwidth — often by 80-90% compared to blind periodic polling — while actually reducing latency for state changes since they're published immediately rather than waiting for the next poll window.
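
The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the class name, the `heartbeat_s` parameter, and the `temp_f` tag are all hypothetical, and timestamps are plain seconds passed in by the caller.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeOfValueFilter:
    """Decides which tag readings are worth publishing (illustrative only)."""

    heartbeat_s: float = 3600.0  # republish unchanged values hourly
    _last: dict = field(default_factory=dict)  # tag -> (value, time sent)

    def should_publish(self, tag: str, value, now: float) -> bool:
        previous = self._last.get(tag)
        changed = previous is None or previous[0] != value
        stale = previous is not None and now - previous[1] >= self.heartbeat_s
        if changed or stale:
            # Remember what was delivered so the next reading compares against it.
            self._last[tag] = (value, now)
            return True
        return False


cov = ChangeOfValueFilter()
# (time in seconds, reading) pairs from a hypothetical temperature tag
readings = [(0, 72.4), (1, 72.4), (2, 72.5), (3, 72.5), (3602, 72.5)]
published = [(t, v) for t, v in readings if cov.should_publish("temp_f", v, t)]
print(published)
```

Of the five readings, only the first value, the change to 72.5, and the hourly refresh are published. A real gateway would usually add a deadband for analog values so that sensor noise does not count as a change.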

QoS Levels: Why QoS 1 Is Almost Always the Right Choice

MQTT defines three Quality of Service levels, and choosing the right one is critical in industrial deployments:

QoS 0 — Fire and Forget

The message is sent at most once, with no acknowledgment and no retry. If the connection drops in transit or the subscriber is disconnected, the message is lost.

When to use it: Almost never in industrial settings. The only exception is high-frequency telemetry where individual samples are expendable — vibration data at 1kHz, for example, where losing a few samples in a burst doesn't affect the analysis.

QoS 1 — At Least Once Delivery

The broker guarantees delivery but may deliver duplicates. The publisher sends the message, waits for a PUBACK from the broker, and retransmits if the acknowledgment doesn't arrive within a timeout.

When to use it: This is the standard for industrial IoT. It guarantees your alarm states and production data reach the broker, and the duplicate delivery risk is easily handled by idempotent processing on the subscriber side (if you receive the same batch timestamp twice, just ignore the duplicate).

In practice, the "at least once" guarantee is exactly what you need for event-driven tag data. When a PLC tag transitions from false to true — say a compressor fault alarm — you need assurance that transition reaches the cloud. QoS 1 provides that assurance with minimal overhead.

QoS 2 — Exactly Once Delivery

A four-step handshake (PUBLISH → PUBREC → PUBREL → PUBCOMP) guarantees exactly-once delivery. The overhead is significant — roughly 2x the round trips of QoS 1.

When to use it: Rarely justified in IIoT. The scenarios where duplicate delivery actually causes problems (financial transactions, one-time commands) are uncommon on the factory floor. The extra latency and bandwidth are almost never worth the guarantee.

The QoS 1 + Idempotent Subscriber Pattern

The production-proven pattern for industrial MQTT looks like this:

    Edge Gateway → MQTT Broker (QoS 1) → Cloud Subscriber
         ↓                                      ↓
    Publish with                         Deduplicate by
    message ID                           batch timestamp +
    + retry on                           device serial number
    no PUBACK

Your edge device publishes each batch with a timestamp and a unique device identifier. On the subscriber side, you check whether you've already processed a message with that exact timestamp from that device. If yes, discard. If no, process and store.

This gives you effectively exactly-once semantics with QoS 1 performance.
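
A rough sketch of the subscriber side, assuming JSON payloads that carry a device serial number in `sn` and a batch timestamp in `ts`. The class and its in-memory set are illustrative; a real subscriber would more likely rely on a bounded window or a unique constraint in the database.

```python
import json


class IdempotentSubscriber:
    """Processes each (device serial, batch timestamp) pair only once."""

    def __init__(self):
        self._seen = set()   # keys already processed
        self.stored = []     # stand-in for the real datastore

    def on_message(self, payload: bytes) -> bool:
        batch = json.loads(payload)
        key = (batch["sn"], batch["ts"])
        if key in self._seen:
            return False  # duplicate redelivery under QoS 1: discard
        self._seen.add(key)
        self.stored.append(batch)
        return True


sub = IdempotentSubscriber()
msg = json.dumps({"cmd": "data", "sn": 16842753, "ts": 1709136000}).encode()
# The same message arriving twice simulates a QoS 1 retransmission.
results = [sub.on_message(msg), sub.on_message(msg)]
print(results, len(sub.stored))
```

The second delivery is recognized and dropped, so the store ends up with exactly one copy.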

Retained Messages and Last Will: The Industrial Essentials

Two MQTT features are particularly important for industrial deployments:

Retained Messages

When a message is published with the retained flag set, the broker stores the last message on that topic and delivers it immediately to any new subscriber. This is essential for device status.

Consider the scenario: your cloud dashboard reconnects after a network outage. Without retained messages, you have no idea whether 50 devices on the factory floor are online or offline until each one publishes its next status update. With retained messages on the status topic, the dashboard gets the current state of every device the instant it subscribes.

Best practice is to publish retained messages on status/heartbeat topics, but not on telemetry topics. You don't want a new subscriber to receive a stale temperature reading from 3 hours ago as if it were current.

Last Will and Testament (LWT)

When an MQTT client connects to the broker, it can register a "last will" message — a message the broker will automatically publish if the client disconnects ungracefully (network failure, power loss, crash).

For edge gateways, the LWT should publish a status message indicating the device is offline:

    {
      "cmd": "status",
      "status": "offline",
      "ts": 0
    }

Combined with periodic status heartbeats (every 60 seconds is typical), this gives you a reliable presence detection system:

- Normal operation: Edge gateway publishes status every 60 seconds → subscribers know device is online

- Graceful shutdown: Edge gateway publishes "offline" status before disconnecting

- Crash/power loss: Broker publishes the LWT "offline" message once the keepalive timeout expires (one and a half times the keepalive interval with no traffic from the client)

The keepalive interval is critical here. Too short (under 30 seconds) and you'll get false offline detections from temporary network hiccups. Too long (over 120 seconds) and there's an unacceptable delay between device failure and detection. 60 seconds is the sweet spot for most industrial deployments.
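
On the subscriber side, presence can be derived from the status messages plus the age of the last heartbeat. The sketch below assumes an "online" status payload shaped like the "offline" one above, and a hypothetical policy of tolerating two missed heartbeats; neither is prescribed by MQTT.

```python
import json


class PresenceTracker:
    """Tracks device presence from status messages and heartbeat age."""

    def __init__(self, heartbeat_s: float = 60.0, missed_allowed: int = 2):
        # Hypothetical policy: a device is stale after this many seconds.
        self.timeout_s = heartbeat_s * missed_allowed
        self._last = {}  # device id -> (status, time received)

    def on_status(self, device: str, payload: bytes, now: float) -> None:
        status = json.loads(payload)["status"]
        self._last[device] = (status, now)

    def is_online(self, device: str, now: float) -> bool:
        entry = self._last.get(device)
        if entry is None:
            return False  # never heard from this device
        status, seen = entry
        return status == "online" and now - seen <= self.timeout_s


tracker = PresenceTracker()
tracker.on_status("gw-1", b'{"cmd": "status", "status": "online", "ts": 100}', now=100)
online_now = tracker.is_online("gw-1", now=150)   # heartbeat is 50 s old
stale = tracker.is_online("gw-1", now=300)        # heartbeat is 200 s old
# The broker delivers the last will after an ungraceful disconnect.
tracker.on_status("gw-1", b'{"cmd": "status", "status": "offline", "ts": 0}', now=310)
after_lwt = tracker.is_online("gw-1", now=311)
print(online_now, stale, after_lwt)
```

Checking heartbeat age as well as the status value covers the case where the LWT itself never reaches the subscriber.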

Sparkplug B: Standardizing Industrial MQTT Payloads

The biggest challenge with raw MQTT in industrial settings has always been payload format. MQTT is payload-agnostic: it doesn't care whether you're sending JSON, binary, Protobuf, or plain text. This flexibility is a double-edged sword.

Without a standard, every integration becomes bespoke. One vendor sends JSON with camelCase keys, another uses snake_case, a third sends raw binary with a custom header format. Your cloud platform needs custom parsers for each.

Sparkplug B (now an Eclipse Foundation specification) solves this by defining:

- Topic namespace: spBv1.0/{group_id}/{message_type}/{edge_node_id}/{device_id} (the device_id level is omitted for node-level messages such as NBIRTH)

- Payload format: Google Protocol Buffers (Protobuf) with a defined schema

- State management: Birth/death certificates, metric definitions, and state machines

- Data types: Boolean, integer (8/16/32/64 bit signed and unsigned), float, double, string, bytes, datetime
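
To make the topic namespace concrete, here is a small sketch that builds and parses node-level and device-level topics. The helper names and the example IDs are made up for illustration, and the STATE topics used by host applications are deliberately left out.

```python
NODE_TYPES = {"NBIRTH", "NDEATH", "NDATA", "NCMD"}
DEVICE_TYPES = {"DBIRTH", "DDEATH", "DDATA", "DCMD"}


def build_topic(group_id, message_type, edge_node_id, device_id=None):
    """Build a Sparkplug B topic for a node-level or device-level message."""
    if message_type in DEVICE_TYPES:
        if device_id is None:
            raise ValueError(f"{message_type} requires a device_id")
        return f"spBv1.0/{group_id}/{message_type}/{edge_node_id}/{device_id}"
    if message_type in NODE_TYPES:
        return f"spBv1.0/{group_id}/{message_type}/{edge_node_id}"
    raise ValueError(f"unsupported message type: {message_type}")


def parse_topic(topic):
    """Split a Sparkplug B topic into its named parts."""
    parts = topic.split("/")
    if len(parts) not in (4, 5) or parts[0] != "spBv1.0":
        raise ValueError(f"not a Sparkplug B node/device topic: {topic}")
    namespace, group_id, message_type, edge_node_id, *rest = parts
    return {
        "namespace": namespace,
        "group_id": group_id,
        "message_type": message_type,
        "edge_node_id": edge_node_id,
        "device_id": rest[0] if rest else None,
    }


device_topic = build_topic("PlantA", "DDATA", "Line1", "Compressor2")
node_topic = build_topic("PlantA", "NBIRTH", "Line1")
parsed = parse_topic(device_topic)
print(device_topic, node_topic, parsed["device_id"])
```

Because the structure is fixed, a subscriber can use a wildcard such as spBv1.0/PlantA/# and still route every message by type, node, and device.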

The Sparkplug State Machine

Sparkplug introduces a formal state machine for edge nodes and devices:

                        ┌─────────┐
    Power On ──────►│ OFFLINE │
                        └────┬────┘
                             │ NBIRTH published
                             ▼
                        ┌─────────┐
                        │ ONLINE  │◄──── NDATA published
                        └────┬────┘      (periodic updates)
                             │
                  ┌──────────┼──────────┐
                  │          │          │
              Lost Conn   NDEATH     Broker
             (LWT fires) published   restart
                  │          │          │
                  ▼          ▼          ▼
                        ┌─────────┐
                        │ OFFLINE │──── Reconnect ────► NBIRTH
                        └─────────┘

The birth certificate (NBIRTH) contains the complete metric definition for the edge node — every tag name, data type, and current value. This means a new subscriber can immediately understand the full data model without any out-of-band configuration.
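
A host application's view of this state machine can be sketched as follows. Real Sparkplug payloads are Protobuf; here plain dicts stand in for already-decoded metrics, and the class and attribute names are hypothetical.

```python
class EdgeNodeState:
    """Host-side view of one edge node, driven by Sparkplug message types."""

    def __init__(self):
        self.online = False
        self.metrics = {}            # metric name -> last known value
        self.needs_rebirth = False   # set when data arrives before a birth

    def handle(self, message_type: str, metrics: dict) -> None:
        if message_type == "NBIRTH":
            # A birth replaces the whole metric model and marks the node online.
            self.metrics = dict(metrics)
            self.online = True
            self.needs_rebirth = False
        elif message_type == "NDEATH":
            self.online = False
        elif message_type == "NDATA":
            if not self.online:
                # Data without a birth: the model is unknown, so ask for a rebirth.
                self.needs_rebirth = True
                return
            self.metrics.update(metrics)


node = EdgeNodeState()
node.handle("NDATA", {"temp": 70.0})                    # arrives before NBIRTH
early = (node.online, node.needs_rebirth, dict(node.metrics))
node.handle("NBIRTH", {"temp": 72.4, "fault": False})
node.handle("NDATA", {"fault": True})
after_data = dict(node.metrics)
node.handle("NDEATH", {})
print(early, after_data, node.online)
```

Data that arrives before a birth is not merged, since the host has no metric definitions to interpret it against.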

Why Sparkplug B Matters for Scale

If you're connecting 5 devices to a single cloud platform, the payload format barely matters. At 500 or 5,000 devices across multiple sites, standardization becomes critical.

Sparkplug's use of Protobuf also provides significant bandwidth savings over JSON. A typical 50-tag batch that might be 2-3KB in JSON encodes to 400-600 bytes in Sparkplug Protobuf format, a 4-5x reduction that matters when you're pushing data over cellular connections with per-MB pricing.

Broker Architecture for Industrial Deployments

The MQTT broker is the single most critical component in your IIoT data pipeline. Every message flows through it, and if it goes down, your entire data collection stops.

Single Broker vs. Broker Cluster

For a single-site deployment with under 100 devices, a single broker instance (Mosquitto, HiveMQ, EMQX) on a dedicated VM is sufficient. Mosquitto can comfortably handle 10,000+ concurrent connections and 50,000+ messages/second on modest hardware (2 cores, 4GB RAM).

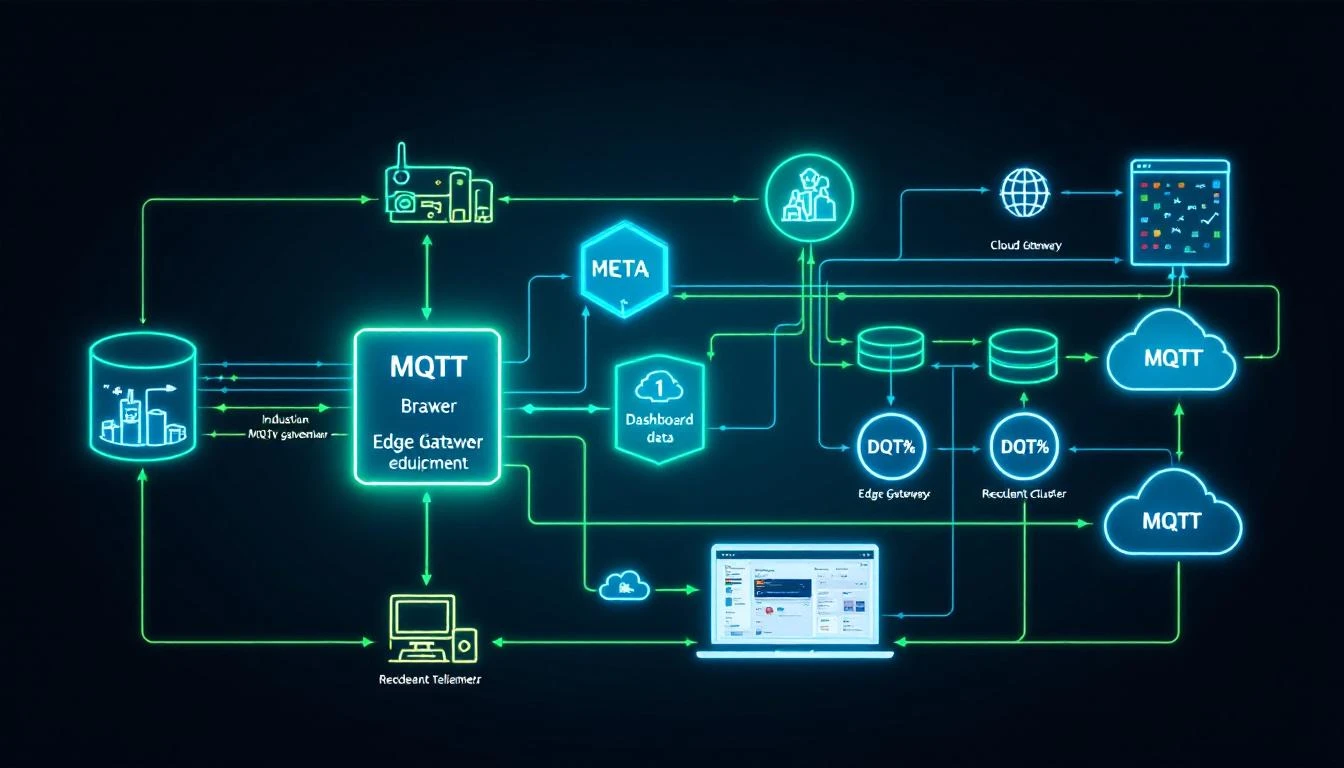

For multi-site or high-availability deployments, you need a clustered broker:

    Site A Edge Gateways ──► Local Broker ──► Cloud Broker Cluster
                                                (3-node minimum)
                                                       │
    Site B Edge Gateways ──► Local Broker ────────────►│
                                                       ▼
                                               Cloud Subscribers
                                            (Dashboards, Analytics,
                                             Historians, Alerting)

The local broker pattern is important: each site runs its own MQTT broker, which bridges to the cloud cluster. This provides:

- Store-and-forward: If the WAN connection drops, the local broker queues messages and delivers them when connectivity returns

- Local subscribers: Site-level dashboards and alarm systems can subscribe to the local broker with sub-millisecond latency

- Reduced WAN traffic: The bridge can be configured to forward only the topics the cloud actually needs, so purely local traffic never crosses the WAN

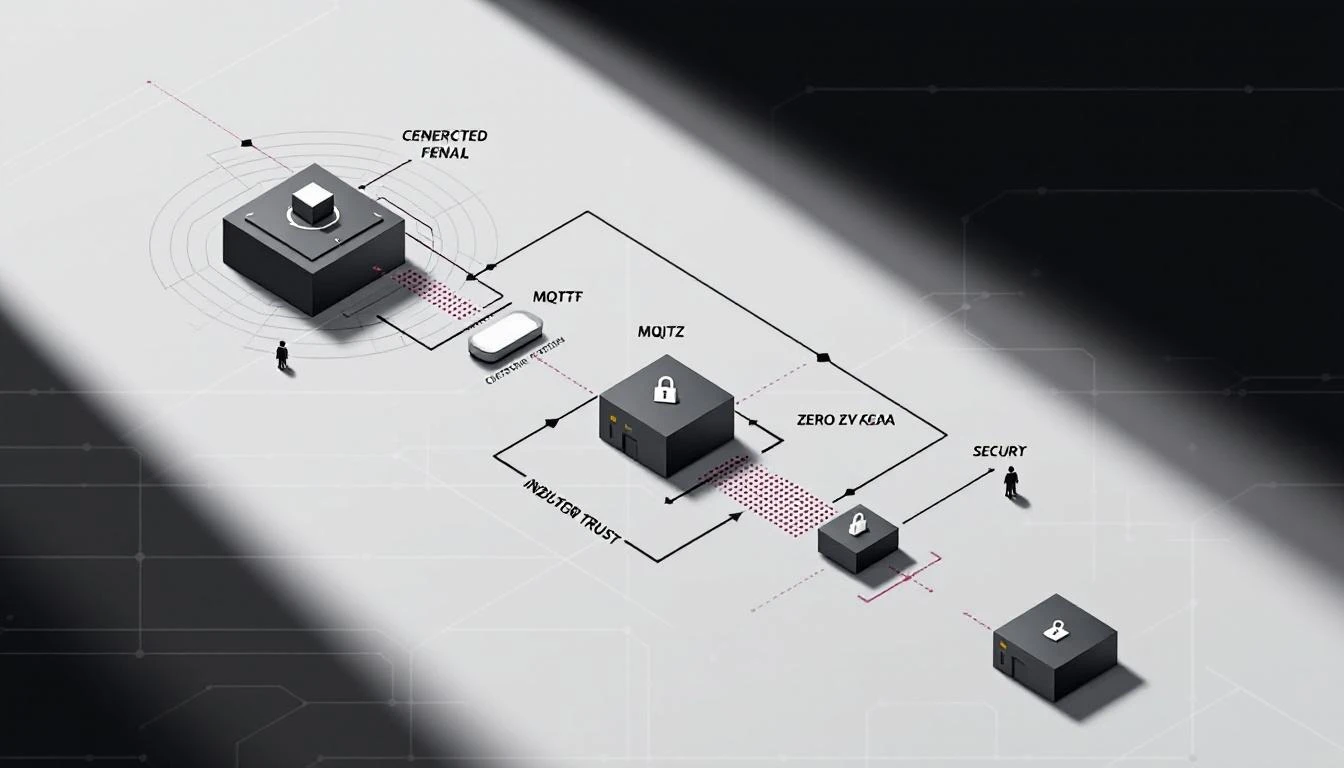

TLS Configuration for Industrial MQTT

MQTT over TLS (port 8883) is non-negotiable for any production deployment. The configuration details matter:

- Certificate management: Use device-specific certificates, not shared keys. Each edge gateway should have its own client certificate signed by your CA. When a device is decommissioned, revoke its certificate without affecting the rest of the fleet.

- Protocol version: TLS 1.2 minimum. TLS 1.3 preferred where both client and broker support it.

- Certificate rotation: Plan for certificate expiry. In industrial environments, devices may run for years. Set certificate validity to 2-5 years and implement a rotation mechanism (OPC-UA has built-in certificate management; for MQTT, you'll need a custom solution or a device management platform).

- Token expiry monitoring: If you're using SAS tokens (common with Azure IoT Hub), monitor the expiry timestamp. An expired token means silent disconnection: your edge gateway will fail to reconnect and you won't get an error unless you're checking. Best practice: compare the token's se (expiry) timestamp against current system time on every connection attempt and log a warning when within 7 days of expiry.
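
The token expiry check can be sketched like this. It assumes the usual SAS token layout, a "SharedAccessSignature" prefix followed by URL-encoded sr, sig, and se fields; the hub name, device name, and signature in the example are placeholders.

```python
from urllib.parse import parse_qs

WARN_WINDOW_S = 7 * 24 * 3600  # warn when within 7 days of expiry


def sas_expiry_status(token: str, now: float) -> str:
    """Return 'expired', 'expiring', or 'ok' for a SAS token string."""
    # Drop the "SharedAccessSignature" prefix, keep the key=value fields.
    _, _, query = token.partition(" ")
    fields = parse_qs(query)
    expiry = int(fields["se"][0])  # se is a Unix timestamp in seconds
    remaining = expiry - now
    if remaining <= 0:
        return "expired"
    if remaining <= WARN_WINDOW_S:
        return "expiring"
    return "ok"


# Placeholder token: the resource URI and signature are not real.
token = (
    "SharedAccessSignature "
    "sr=example-hub.azure-devices.net%2Fdevices%2Fgw-1&sig=abc123&se=1709136000"
)
day = 24 * 3600
statuses = [
    sas_expiry_status(token, now=1709136000 - 8 * day),
    sas_expiry_status(token, now=1709136000 - 3 * day),
    sas_expiry_status(token, now=1709136000 + 1),
]
print(statuses)
```

Calling this before every connection attempt turns a silent reconnect failure into a log line that points at the cause. A token without an se field raises KeyError here, which a real client would want to catch and report.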

Connection Resilience

Industrial networks are unreliable. Cellular connections drop, site VPNs flap, firewalls time out idle connections. Your MQTT client implementation must handle all of these gracefully:

- Automatic reconnection: Use mosquitto_reconnect_delay_set() or an equivalent to set the retry delay, with exponential backoff enabled. An initial delay of about 5 seconds is appropriate for most industrial deployments: fast enough to recover quickly from a brief drop, while the backoff keeps the gateway from hammering the broker during extended outages.

- Asynchronous connection: Never block the main data collection loop waiting for MQTT to connect. Run the connection process in a background thread so PLC tag reading continues even when MQTT is down. Buffer the data locally and deliver it when connectivity returns.

- Clean session = false: Set clean_session to false (MQTT 3.1.1) or Clean Start to false with a non-zero Session Expiry Interval (MQTT 5.0), and keep the client ID stable, so the broker maintains your subscription state across reconnections. This prevents missing QoS 1 messages during brief disconnections.
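
The reconnect schedule itself is simple to reason about. The sketch below generates delays that start at 5 seconds and double up to a cap; the 300-second cap and the function name are assumptions for illustration, not values taken from any particular client library.

```python
from itertools import islice


def reconnect_delays(initial_s=5.0, max_s=300.0, factor=2.0):
    """Yield the wait before each successive reconnect attempt."""
    delay = initial_s
    while True:
        yield delay
        # Grow the delay after each failed attempt, but never past the cap.
        delay = min(delay * factor, max_s)


delays = list(islice(reconnect_delays(), 8))
print(delays)
```

The first retry comes after 5 seconds, and after about five minutes of failures the gateway settles into one attempt every 300 seconds. Fleets that lose connectivity together often add random jitter to each delay so they do not all reconnect at the same instant.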

Batching: The Performance Multiplier Nobody Talks About

One of the most impactful optimizations for industrial MQTT is intelligent batching — grouping multiple tag values into a single MQTT publish rather than publishing each tag individually.

Why Batching Matters

Consider a device with 100 tags, all updating every second. Without batching, that's 100 MQTT publishes per second: 100 PUBLISH packets each carrying its own header overhead (and its own PUBACK at QoS 1), 100 broker message handling operations, 100 subscriber deliveries.

With batching, you group all tags that changed in the same read cycle into a single message. The structure typically looks like:

    {
      "cmd": "data",
      "ts": 1709136000,
      "sn": 16842753,
      "type": 1017,
      "groups": [
        {
          "ts": 1709136000,
          "values": [
            [1, 0, 0, 0, [1]],
            [80, 0, 0, 0, [724]],
            [82, 0, 0, 0, [185]]
          ]
        }
      ]
    }

Each value entry carries the tag ID, status, and value(s) — compact enough that 50 tags fit in under 1KB. The result: 1 MQTT publish per second instead of 100, with identical data delivered.

Batch Size and Timeout Tuning

Two parameters control batching behavior:

- Max batch size (bytes): The maximum payload size before the batch is flushed. 500KB is a reasonable upper limit, large enough to hold hundreds of tags but small enough to avoid memory pressure on constrained edge hardware.

- Batch timeout (seconds): The maximum time a batch can be held open before flushing, regardless of size. This ensures low-frequency data gets delivered promptly. 5-10 seconds is typical.
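
How the two parameters interact is easiest to see in code. The following is a simplified sketch with a flat `values` list and hypothetical method names; it re-encodes the batch on every add to measure its size, which a real gateway would replace with a running byte count.

```python
import json


class Batcher:
    """Collects readings and flushes on a size limit or a timeout."""

    def __init__(self, max_bytes=500_000, timeout_s=5.0):
        self.max_bytes = max_bytes
        self.timeout_s = timeout_s
        self._values = []
        self._opened_at = None  # time the first reading entered the batch

    def add(self, tag_id, value, now):
        """Add one reading; return a payload if the size limit is reached."""
        if self._opened_at is None:
            self._opened_at = now
        self._values.append([tag_id, value])
        if len(self._encode(now)) >= self.max_bytes:
            return self.flush(now)
        return None

    def poll(self, now):
        """Return a payload if the batch has been open for the full timeout."""
        if self._opened_at is not None and now - self._opened_at >= self.timeout_s:
            return self.flush(now)
        return None

    def flush(self, now):
        payload = self._encode(now)
        self._values, self._opened_at = [], None
        return payload

    def _encode(self, now):
        body = {"cmd": "data", "ts": int(now), "values": self._values}
        return json.dumps(body).encode()


batcher = Batcher(max_bytes=10_000, timeout_s=5.0)
first = batcher.add(80, 724, now=0.0)   # well under the size limit
early = batcher.poll(now=4.0)           # timeout not reached yet
late = batcher.poll(now=5.0)            # timeout reached: batch is flushed
print(first, early, json.loads(late)["values"])
```

The main loop would call add() for each changed tag and poll() once per read cycle, publishing whatever payload either one returns.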

The Exception: Critical Alarms

Not every tag should be batched. Safety-critical alarms — compressor faults, high-pressure switches, flow switch failures — should bypass the batch entirely and be published immediately as individual messages.

The pattern: tag your alarm points with a "do not batch" flag. When these tags change value, publish them immediately via a direct MQTT publish, bypassing the batching layer. The latency difference between a batched delivery (up to 10 seconds) and a direct publish (under 100ms) can be the difference between catching a fault early and a costly shutdown.
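
In code, the bypass amounts to one branch in the routing logic. The `Tag` type, the `do_not_batch` field name, and the `route` function below are illustrative; `publish_now` stands in for whatever direct MQTT publish call the gateway uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tag:
    tag_id: int
    name: str
    do_not_batch: bool = False  # set for safety-critical alarm points


def route(tag, value, previous, batch, publish_now):
    """Send a changed alarm tag immediately; queue everything else."""
    if tag.do_not_batch and value != previous:
        publish_now(tag.tag_id, value)
    else:
        batch.append((tag.tag_id, value))


sent, batch = [], []


def publish_now(tag_id, value):
    # Stand-in for a direct MQTT publish.
    sent.append((tag_id, value))


fault = Tag(1, "compressor_fault", do_not_batch=True)
temp = Tag(80, "discharge_temp")
route(fault, 1, 0, batch, publish_now)    # alarm changed: goes out immediately
route(temp, 724, 720, batch, publish_now)  # ordinary tag: waits for the batch
print(sent, batch)
```

Keeping the flag on the tag definition means the decision lives in configuration, not in the publishing code.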

Binary vs. JSON Payloads: The Bandwidth Tradeoff

For industrial MQTT, you have two practical payload format choices:

JSON

- Pros: Human-readable, easy to debug, universally parsed

- Cons: Verbose, ~3-5x larger than binary equivalents

- Best for: Development, debugging, small deployments, or when bandwidth isn't a concern

Binary (Custom or Protobuf)

- Pros: Compact (often 4-5x smaller than JSON), faster to serialize/deserialize

- Cons: Requires schema documentation, harder to debug

- Best for: Production deployments with cellular connectivity, large tag counts, or bandwidth-constrained environments

A well-designed binary format packs each tag value into a compact record: 2 bytes for tag ID, 1 byte for status, 1 byte for type, and 2-4 bytes for the value, or 6-8 bytes per tag. A 50-tag batch becomes roughly 300-400 bytes plus a small header, instead of 2-3KB in JSON.
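
The arithmetic is easy to check with Python's struct module. The layout below (little-endian, 16-bit signed values) and the sample readings are assumptions chosen to match the field sizes described above, not a published format.

```python
import json
import struct

# Hypothetical record: tag ID (uint16), status (uint8), type (uint8), value (int16).
RECORD = struct.Struct("<HBBh")


def pack_batch(readings):
    """Pack (tag_id, status, type_code, value) tuples into one byte string."""
    return b"".join(RECORD.pack(*reading) for reading in readings)


def unpack_batch(data):
    """Recover the list of tuples from a packed batch."""
    return list(RECORD.iter_unpack(data))


# 50 made-up readings: status 0, type code 1, values between 701 and 750.
readings = [(tag_id, 0, 1, 700 + tag_id) for tag_id in range(1, 51)]
binary = pack_batch(readings)
as_json = json.dumps([list(r) for r in readings]).encode()
print(RECORD.size, len(binary), len(as_json))
```

Each record is 6 bytes, so the 50-tag batch packs to exactly 300 bytes, while even a bare JSON array of the same numbers is several times larger before any keys are added.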

The practical recommendation: start with JSON during development and commissioning (the ability to read raw payloads in a debug tool is invaluable), then switch to binary for production when bandwidth matters.

Store-and-Forward: Don't Lose Data During Outages

The most common failure mode in industrial MQTT is losing data during connectivity outages. The edge gateway reads values from PLCs, tries to publish to MQTT, fails because the broker is unreachable, and... drops the data.

A production-grade edge gateway needs a local buffer that stores data when MQTT is disconnected and delivers it in order when connectivity returns.

The buffer architecture should:

- Pre-allocate memory: Don't dynamically allocate during operation. Pre-allocate a fixed buffer (512KB to 8MB depending on available RAM) and divide it into fixed-size pages.

- Use a page-based queue: Data flows into a "work page" until it's full, then the page moves to a "ready" queue. When MQTT is connected, pages are transmitted in order.

- Handle overflow gracefully: When the buffer is full and new data arrives, overwrite the oldest undelivered page (not the newest). In an extended outage, you want the most recent data, not the oldest.

- Track delivery confirmation: Don't free a buffer page until the MQTT PUBACK confirms the broker received it. If the connection drops mid-delivery, the page stays in the queue for retry.

This architecture avoids data loss during outages of minutes to hours, as long as the buffer does not overflow (how long that takes depends on buffer size and data rate), and it does so without any disk I/O, which is critical for edge devices running on flash storage where write endurance is a concern.
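
The four buffer requirements can be sketched together in one small class. This is a single-threaded illustration with hypothetical names and tiny page sizes; a real gateway would typically implement the same idea in C with locking around the queues.

```python
from collections import deque


class PageBuffer:
    """Fixed pool of pages: fill one, queue it, free it only on PUBACK."""

    def __init__(self, page_size=4096, page_count=128):
        self.page_size = page_size
        # All memory is allocated up front; pages are only recycled afterwards.
        self._free = deque(bytearray(page_size) for _ in range(page_count))
        self._ready = deque()  # (seq, page, used) tuples, oldest first
        self._work = self._free.popleft()
        self._used = 0
        self._next_seq = 0
        self.dropped_pages = 0

    def write(self, record: bytes) -> None:
        if len(record) > self.page_size:
            raise ValueError("record larger than a page")
        if self._used + len(record) > self.page_size:
            self._rotate()
        self._work[self._used:self._used + len(record)] = record
        self._used += len(record)

    def _rotate(self) -> None:
        self._ready.append((self._next_seq, self._work, self._used))
        self._next_seq += 1
        if not self._free:
            # Buffer full: recycle the oldest undelivered page.
            _, page, _ = self._ready.popleft()
            self.dropped_pages += 1
            self._free.append(page)
        self._work, self._used = self._free.popleft(), 0

    def peek(self):
        """Return (seq, data) for the oldest ready page; it stays queued."""
        if not self._ready:
            return None
        seq, page, used = self._ready[0]
        return seq, bytes(page[:used])

    def ack(self, seq: int) -> None:
        """Call on PUBACK: free the page only if it is still the oldest one."""
        if self._ready and self._ready[0][0] == seq:
            _, page, _ = self._ready.popleft()
            self._free.append(page)


buf = PageBuffer(page_size=8, page_count=3)
for record in (b"AAAAAAAA", b"BBBBBBBB", b"CCCCCCCC", b"DDDDDDDD"):
    buf.write(record)  # the fourth write overflows and drops page A
first = buf.peek()
buf.ack(first[0])
second = buf.peek()
print(first, second, buf.dropped_pages)
```

With three pages and four page-sized records, the oldest page is overwritten and delivery resumes from the second record. The sequence number passed to ack() guards against freeing the wrong page if an overflow recycled the one that was in flight.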

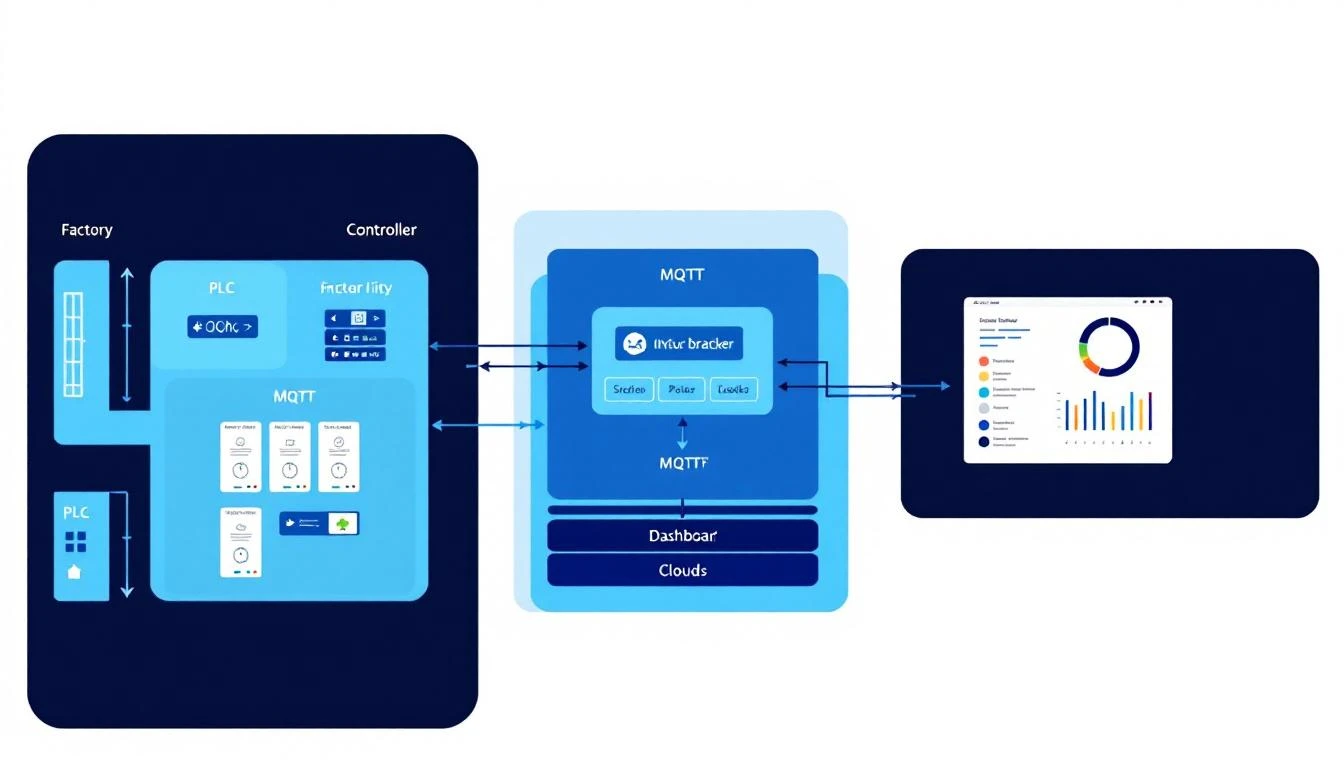

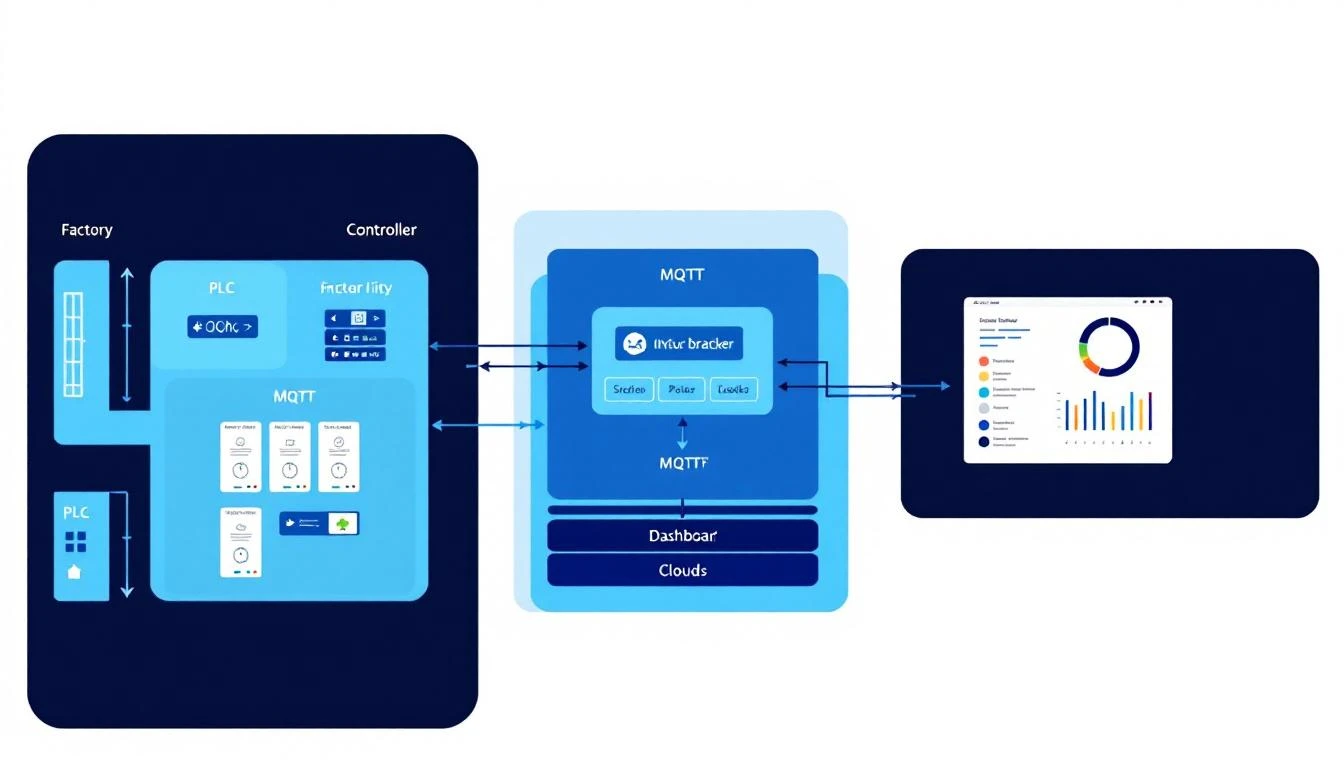

How machineCDN Handles Industrial MQTT

machineCDN's edge infrastructure implements all of the patterns described above. The edge gateway handles multi-protocol tag reading (Modbus RTU, Modbus TCP, EtherNet/IP), intelligent batching with change-of-value detection, and resilient MQTT delivery with a page-based store-and-forward buffer.

The platform supports both JSON and binary payload formats, configurable per device. Critical alarm tags can be flagged for immediate delivery, bypassing the batch. And the MQTT connection layer handles automatic reconnection with proper keepalive management — including SAS token expiry monitoring for Azure IoT Hub deployments.

For teams deploying MQTT in industrial environments, the combination of protocol-native tag reading and production-grade MQTT delivery eliminates the most common integration pitfalls — and lets engineers focus on the process data rather than the plumbing.

Key Takeaways

- Use QoS 1 with idempotent subscribers — it's the right balance for industrial data

- Implement change-of-value detection at the edge to reduce bandwidth by 80-90%

- Batch tag values into single publishes, but bypass the batch for critical alarms

- Build a store-and-forward buffer that pre-allocates memory and tracks delivery confirmation

- Use TLS with device-specific certificates — shared keys are a security liability at scale

- Deploy local brokers at each site to provide resilience and local subscriptions

- Consider Sparkplug B if you're connecting devices from multiple vendors or scaling past 100 endpoints

- Monitor connection health actively — check keepalive timers, token expiry, and buffer utilization

MQTT is not just a protocol choice — it's an architecture decision. Get the broker topology, QoS level, and buffering strategy right, and you'll have a data pipeline that's resilient enough for real industrial operations.